Consortium for Electric Reliability Technology Solutions

Grid of the Future

White Paper on

Review of Recent Reliability Issues and System Events

Prepared for the

Transmission Reliability Program

Office of Power Technologies

Assistant Secretary for Energy Efficiency and Renewable Energy

U.S. Department of Energy

Prepared by

John F. Hauer

Jeff E. Dagle

Pacific Northwest National Laboratory

December 9, 1999

The work described in this report was funded by the Assistant Secretary of Energy Efficiency and Renewable Energy, Office of Power Technologies of the U.S. Department of Energy under Contract No. DE-AC03-76SF00098.

Consortium for Electric Reliability Technology Solutions

Grid of the Future

White Paper on

Review of Recent Reliability Issues and System Events

Executive Summary *

1. Introduction *

2. Preliminary Remarks *

3. Overview of Major Electrical Outages in North America *

3.1 Northeast Blackout: November 9-10, 1965 [18] *

3.2 New York City Blackout: July 13-14, 1977 [19] *

3.3 Recent Western Systems Coordinating Council (WSCC) Events *

3.3.1 WSCC Breakup (earthquake): January 17, 1994 [] *

3.3.2 WSCC Breakup: December 14, 1994 [21] *

3.3.3 WSCC Breakup: July 2, 1996 [,,] *

3.3.4 WSCC "Near Miss:" July 3, 1996 [30,13] *

3.3.5 WSCC Breakup: August 10, 1996 [9,,12,13] *

3.4 Minnesota-Wisconsin Separation and "Near Miss:" June 11-12, 1997 [,] *

3.5 MAPP Breakup: June 25, 1998 [,] *

3.6 NPCC Ice Storm: January 5-10, 1998 [] *

3.7 San Francisco Tripoff: December 8, 1998 [] *

3.8 "Price Spikes" in the Market *

3.9 The Hot Summer of 1999 *

4. The Aftermath of Major Disturbances *

5. Recurring Factors in North American Outages *

5.1 Protective Controls — Relays *

5.2 Protective Controls — Relay Coordination *

5.3 Unexpected Circumstances *

5.4 Circumstances Unknown to System Operators *

5.5 Understanding Power System Phenomena *

5.6 Challenges in Feedback Control *

5.7 Maintenance Problems, RCM, and Intelligent Diagnosticians *

5.8 "Operator Error" *

6. Special Lessons From Recent Outages — August 10, 1996 *

6.1 Western System Oscillation Dynamics *

6.2 Warning Signs of Pending Instability *

6.3 Stability Control Issues *

6.4 The Issue of Model Validity *

6.5 System Planning Issues *

6.6 Institutional Issues — the WSCC *

6.7 Institutional Issues — the Federal Utilities and WAMS *

7. Focus Areas for DOE Action *

8. Summary of Findings and Implications *

Executive Summary

The objective of this White Paper is to review, analyze, and evaluate critical reliability issues as demonstrated by recent disturbance events in the North America power system. The system events are assessed for both their technological and their institutional implications. Policy issues are noted in passing, in so much as policy and policy changes define the most important forces that shape power system reliability on this continent.

Eleven major disturbances are examined. Most of them occurred in this decade. Two earlier ones — in 1965 and 1977 — are included as early indictors of technical problems that persist to the present day. The issues derived from the examined events are, for the most part, stated as problems and functional needs. Translating these from the functional level into explicit recommendations for Federally supported RD&D is reserved for CERTS White Papers that draw upon the present one.

The strategic challenge is that the pattern of technical need has persisted for so long. Anticipation of market deregulation has, for more than a decade, been a major disincentive to new investments in system capacity. It has also inspired reduced maintenance of existing assets. A massive infusion of better technology is emerging as the final option for continued reliability of electrical services. If that technology investment will not be made in a timely manner, then that fact should be recognized and North America should plan its adjustments to a very different level of electrical service.

It is apparent that technical operations staff among the utilities can be very effective at marshaling their forces in the immediate aftermath of a system emergency, and that serious disturbances often lead to improved mechanisms for coordinated operation. It is not at all apparent that such efforts can be sustained through voluntary reliability organizations in which utility personnel external to those organizations do most of the technical work. The eastern interconnection shows several situations in which much of the technical support has migrated from the utilities to the Independent System Operator (ISO), and the ISO staffs or shares staff with the regional reliability council. This may be a natural and very positive consequence of utility restructuring. If so, the fact should be recognized and the process should be expedited in regions where the process is less advanced.

The August 10, 1996 breakup of the Western interconnection demonstrates the problem. It is clear that better technology might have avoided this disturbance, or at least minimized its impact. The final message is a broader one. All of the technical problems that the Western Systems Coordinating Council (WSCC) identified after the August 10 Breakup had been progressively reported to it in earlier years, along with an expanded version of the countermeasures eventually adopted. Through a protracted decline in planning resources among the member utilities, the WSCC had lost its collective memory of these problems and much of the critical competency needed to resolve them. The market forces that caused this pervade all of North America. Similar effects should be expected in other regions as well, though the symptoms will vary.

Hopefully, such institutional weaknesses are a transitional phenomenon that will be remedied as new organizational structures for grid operations evolve, and as regional reliability organizations acquire the authority and staffing consistent with their expanding missions. This will provide a more stable base and rationale for infrastructure investments. Difficult issues still remain in accommodating risk and in reliability management generally. Technology can provide better tools, but it is National policy that will determine if and how such tools are employed. That policy should consider the deterrent effect that new liability issues pose for the pathfinding uses of new technology or new methods in a commercially driven market.

The progressive decline of reliability assets that preceded many of these reliability events, most notably the 1996 breakups of the Western system, did not pass unnoticed by the Federal utilities and by other Federal organizations involved in reliability assurance. Under an earlier Program, the DOE responded to this need through the Wide Area Measurement System (WAMS) technology demonstration project. This was of great value for understanding the breakups and restoring full system operations. The continuing WAMS effort provides useful insights into possible roles for the U.S. Department of Energy (DOE) and for the Federal utilities in reliability assurance.

To be fully effective in such matters the DOE should probably seek closer "partnering" with operating elements of the electricity industry. This can be approached through greater involvement of the Federal utilities in National Laboratory activities, and through direct involvement of the National Laboratories in support of all utilities or other industry elements that perform advanced grid operations. The following activities are proposed as candidates for this broader DOE involvement:

- National Institute for Energy Assurance (NIEA) to safeguard, integrate, focus, and refine critical competencies in the area of energy system reliability. The NIEA will be organized as a distributed "virtual organization" based upon the Department of Energy and its National Laboratories, the Federal Utilities, and energy industry groups such as the Electric Power Research Institute and the Gas Research Institute. The NIEA will provide coordination with universities and other industry organizations, and provide collaborative linkages with other professional organizations and the vendor community. The NIEA will expedite sharing and transfer of technology, knowledge, and skills developed within the Federal system. Electric utilities, grid operators, and reliability organizations such as NERC/NAERO will be supported by the NIEA as needed, and through the formation of Emergency Response Teams during unusual system emergencies.

- Dynamic Information Network (DInet) for reliable planning and operation. An advanced demonstration project building upon the earlier DOE/EPRI Wide Area Measurement System effort, plus Federal technologies for data mining, visualization, and advanced computing. Core technologies also include centralized phasor measurements, mathematical system theory, advanced signal analysis, and secure distributed information processing. The DInet itself will provide a testbed for new technology, plus information support to wide area control projects and the evolving Interregional Security Network. Focus issues for this program include direct examination and assessment of power system dynamic performance, systematic validation and refinement of computer models, and sharing of WAMS technologies developed for these purposes.

- Modeling the Public Good in Reliability Management. Exploratory research into means for representing National interests as objectives and/or constraints in the emerging generation of decision support tools for reliability management. Examples of National interests include an effective power grid for the deregulated U.S. power markets and a secure, resilient grid to protect the national interests in an increasingly digital economy. The key technical product will be a global framework for reliability management that incorporates a full range of technical, social and economic issues. Elements within this framework include determining and quantifying the full impact of reliability failures, probabilistic indicators for risk, treatment of mandates and subjective preferences toward options, mathematical modeling, and decision algorithms. To test and evaluate the principles involved, this research may include joint demonstration projects with EPRI or other developers of probabilistic tools.

- Recovery Systems for Disturbance Mitigation, to lessen the impact of system disturbances and to lessen the dependence upon preventive measures. Dynamic restoration controls, based upon real time phasor information, would reduce the violence of the event itself and steer the system toward automatic reclosure of open transmission elements. This might include temporary separation of the system into islands that are linked by HVDC or FACTS devices. If needed, operators would continue the process and restore customer services on a prioritized basis. Comprehensive information systems (advanced WAMS) would expedite the engineering analysis and repair processes needed to fully restore power system facilities.

All of these activities would take place at the highest strategic level, and in areas that commercial market activities are unlikely to address.

- Introduction

This White Paper is one of six developed under the U.S. Department of Energy (DOE) Program in Power System Integration and Reliability (PSIR). The work is being performed by or in coordination with the Consortium for Electrical Reliability Technology Solutions (CERTS), under the Grid of the Future Task.

The objective of this particular White Paper is to review, analyze, and evaluate critical reliability issues as demonstrated by recent disturbance events in the North America power system. The lead institution for this White Paper is the DOE’s Pacific Northwest National Laboratory (PNNL). The work is performed in the context of reports issued by the U.S. Secretary of Energy Advisory Board (SEAB) [,], and it builds upon earlier findings drawn from the DOE Wide Area Measurement Systems Project [,,]. Related information can also be found in the Final Report of the Electric Power Research Institute (EPRI) WAMS Information Manager Project [].

The system events are assessed for both their technological and their institutional implications. Some of the more recent events reflect new market forces. Consequently, they may also reflect upon the changing policy balance between reliability assurance and open market competition. This balance is considered here from a historical perspective, and only to the extend necessary for event assessment.

The White Paper also makes brief mention of a different kind of reliability event that was very conspicuous across eastern North America during the summer of 1999. These represented shortages in energy resources, rather than main-grid disturbances. Even so, they reflect many of the same underlying reliability issues. These are being examined under a separate activity, conducted by a Post-Outage Study Team (POST) established by DOE Energy Secretary Richardson [].

Primary contributions of this White Paper include the following:

- Summary descriptions of the system events, with bibliographies

- Recurring factors in these events, presented as technical needs

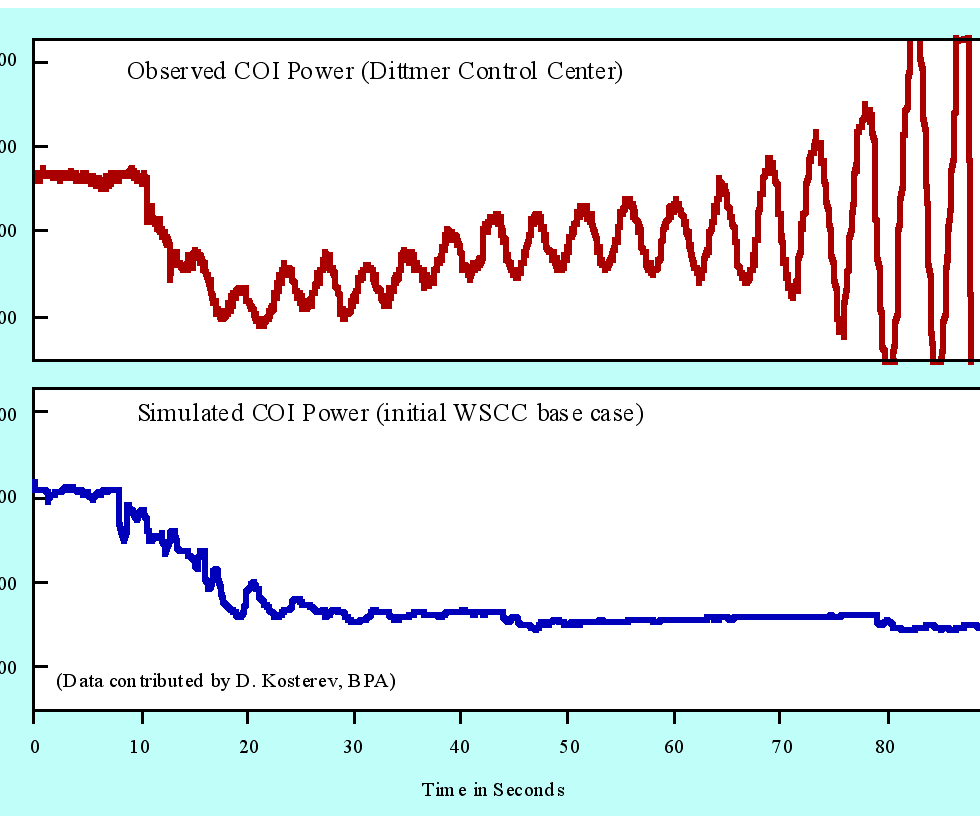

- Results showing how better information technology would have warned system operators of impending oscillations on August 10, 1996

- The progression by which market forces eroded WSCC capability to anticipate and avoid the August 10 breakup

- The progression by which market forces eroded the ability of the Bonneville Power Administration (BPA), and other Federal utilities, to sustain their roles as providers of reliability services and technology

- "Lessons learned" during critical infrastructure reinforcement by the DOE WAMS Project.

Various materials are also provided as background, or for possible use in related documents within the Project. The issues derived from the examined events are, for the most part, stated as problems and functional needs. Translating these from the functional level into explicit recommendations for Federally supported RD&D is reserved for a subsequent CERTS effort.

- Preliminary Remarks

Some comments are in order as to the approach followed in this White Paper. The authors are well aware of the risk that too much — or too little — might be inferred from what may seem to be just anecdotal evidence. It is important to consider not only what happened, but also why it happened and the degree to which effective countermeasures have since been established. New measurement systems, developed and deployed expressly for such purposes, recorded the WSCC breakups of 1996 in unusual detail [,,]. The information thus acquired provided a basis for engineering reviews that were more detailed and more comprehensive than are usually possible [,,,,,]. In addition to this, the lead author was deeply involved in an earlier and very substantial BPA/WSCC efforts to clarify and reduce the important planning uncertainties that later contributed to the 1996 breakups [,,]. The total information base for assessing these events is extensive, though important gaps remain. Some of the finer details, concerning matters such as control system behavior and the response of system loads, are not certain and they may never be fully resolved.

There are also some caveats to observe in translating WSCC experience to other regions. The salient technical problems on any large power system are often unique to just that system. The factors that determine this include geography, weather, network topology, generation and load characteristics, age of equipment, staff resources, maintenance practices, and many others. The western power system is "loosely connected," with a nearly longitudinal "backbone" for north-south power exchanges. Many of the generation centers there are very large, and quite remote from the loads they serve. In strong contrast to this, most of eastern North America is served by a "tightly meshed" power system in which transmission distances are far shorter. Differences in the problems that engineers face on these systems differ more in degree than in kind, however. Oscillation problems that plague the west are becoming visible in the east, and the voltage collapse problem has migrated westward since the great blackouts of 1965 and 1977 [,,]. Problems on any one system can very well point to future problems on other systems.

It is also important to assess large and dramatic reliability events within the overall context of observed system behavior. The WSCC breakup on July 2 followed almost exactly the same path as a breakup some 18 months earlier []. Some of the secondary problems from July 2 carried over to the even bigger breakup on August 10, and were important contributors to the cascading outage. The August 10 event was much more complex in its details and underlying causes, however. It was in large part a result of planning models that overstated the safety factor in high power exports from Canada, compounded by deficiencies in generator control and protection [,]. Symptoms of these problems were provided by many smaller disturbances over the previous decade, and by staged tests that BPA and WSCC technical groups had performed to correct the modeling situation [,].

In the end event, the WSCC breakups of 1996 were the consequence of known problems that had persisted for too long []. One reason for this was the fading of collective WSCC memory through staff attrition among the member utilities. A deeper reason was that "market signals" had triggered a race to cut costs, with reduced attention to overall system reliability. Technical support to the WSCC mission underwent a protracted decline among the utilities, with a consequent weakening of staffing and leadership. Many needed investments in reliability technologies were deferred to future grid operators.

The pattern of disturbances and other power system emergencies argues that the same underlying forces are at work across all of North America. At first inspection and at the lowest scale of detail, the ubiquitous relay might seem the villain in just about all of the major disturbances since 1965 []. Looking deeper, one may find that particular relays are obsolescent or imperfectly maintained, that relay settings and "intelligence" do not match the present range of operating conditions, and that coordinating wide area relay systems is an imperfect art. Ways to remedy these problems can be developed [,], but rationalization of that development must also make either a market case or a regulatory case for deployment of the product by the electricity industry.

At the highest scale of detail, system emergencies in which generated power is not adequate to serve customer load seem to have become increasingly common. Allegations have been made that some of these scarcities have been created or manipulated to produce "price spikes" in the spot market for electricity. Whether this can or does happen is important to know but difficult to establish. Even here better technology may provide at least partial remedies. There is an obvious role for better assets management tools, such as Flexible AC Transmission System (FACTS) technologies, to relieve congestion in the energy delivery system (to the extent that such a problem does indeed exist as a separable factor []). More abstractly, systems for "data mining" may be able to recognize market manipulations and operations research methodology might help to develop markets that are insensitive to such manipulations. This is a zone in which the search for solutions crosses from technology into policy.

Somehow, the electricity industry itself must be able to rationalize continued investments in raw generation and in all the technologies that are needed to reliably deliver quality power to the consumer [,]. Some analysts assert that reliability is a natural consequence and a salable commodity at the "end state" of the deregulatory process. While this could prove true, eventually, the transition to that end state may be protracted and uncertain. It may well be that the only mechanism to assure reliability during the transition itself is that provided by the various levels of government acting in the public interest.

A final caveat is that utility engineers are rather more resourceful than outside observers might realize. It can be very difficult to track or assist utility progress toward some technical need without being directly involved. So, before too many conclusions are drawn from this White Paper, CERTS should develop a contemporary estimate as to just how much has already been done — and how well it fits into the broader picture. It might be useful to circulate selected portions of the White Papers for comment among industry experts who are closely familiar with the subject matter.

Relevance and focus of the CERTS effort will, over the longer term, require sustained dialog with operating utilities. As field arms of the DOE, and through their involvement in reliability assurance, the Power Marketing Agencies are good candidates for this. It is highly desirable that the dialog not be restricted to just a few such entities, however.

- Overview of Major Electrical Outages in North America

This Section provides summary descriptions for the following electrical outages in North America:

- Northeast Blackout: November 9-10, 1965

- New York City Blackout: July 13-14, 1977

- WSCC Breakup (earthquake): January 17, 1994

- WSCC Breakup: December 14, 1994

- WSCC Events in Summer 1996

- July 2, 1996 — cascading outage

- July 3, 1996 — cascading outage avoided

- August 10, 1996 — cascading outage

- Minnesota-Wisconsin Separation: June 11-12, 1997

- MAPP Breakup: June 25, 1998

- NPCC Ice Storm: January 5-10, 1998

- San Francisco Tripoff: December 8, 1998

Each of these disturbances contains valuable information about the management and assurance of power system reliability. More detailed descriptions can be found by working back through the indicated references. In many cases these will also describe system restoration, which can be more complex and provide more insight into needed improvements than the disturbance itself. Together, it is not unusual for a disturbance plus restoration to involve several hundred system operations. Some of these may not be accurately recorded, and a few may not be recorded at all.

- Northeast Blackout: November 9-10, 1965 []

This event began with sequentially tripping of five 230 kV lines transporting power from the Beck plant (on the Niagara River) to the Toronto, Ontario load area. The tripping was caused by backup relays that, unknown to the system operators, were set at thresholds below the unusually high but still safe line loadings of recent months. These loadings reflected higher than normal imports of power from the United States into Canada, to cover emergency outages of the nearby Lakeview plant. Separation from the Toronto load produced a "back surge" of power into the New York transmission system, causing transient instabilities and tripping of equipment throughout the northeast electrical system. This event directly affected some 30 million people across an area of 80,000 square miles. That it began during a peak of commuter traffic (5:16 p.m. on a Tuesday) made it especially disruptive.

This major event was a primary impetus for foundation of the North American Electric Reliability Council (NERC) and, somewhat later, of the Electric Power Research Institute (EPRI).

- New York City Blackout: July 13-14, 1977 []

A lightning stroke initiated a line trip which, through a complex sequence of events, lead to total voltage collapse and blackout of the Consolidated Edison system some 59 minutes later (9:36 p.m.). The 9 million inhabitants of New York City were to be without electrical power for some 25 hours. Impact of this blackout was greatly exacerbated by widespread looting, arson, and violence. Disruption of public transportation and communications was massive, and the legal resources were overwhelmed by the rioting. Estimated financial cost of this event is in excess of 350 million dollars, to which many social costs must be added.

Several aspects of this event were exceptional for that time. One of these was the very slow progression of the voltage collapse. Another was the considerable damage to equipment during re-energization. This is one of the "benchmark" events from which the electricity industry has drawn many lessons useful to the progressive interconnection of large power systems.

- Recent Western Systems Coordinating Council (WSCC) Events

For reasons stated earlier, special attention is given to the WSCC breakups in the summer of 1996. This is part of a series (shown in Table I) that has received a great deal of attention from the public, the electricity industry, and various levels of government. In part this is because the events themselves were very conspicuous. The August 10 Breakup affected some 7.5 million people across a large portion of North America, and is estimated to have cost the economy at least 2 billion dollars. There is also a great deal of dramatic impact to news images of the San Francisco skyline in a night without lights.

Table I. Topical outages in the western power system, 1994-1998

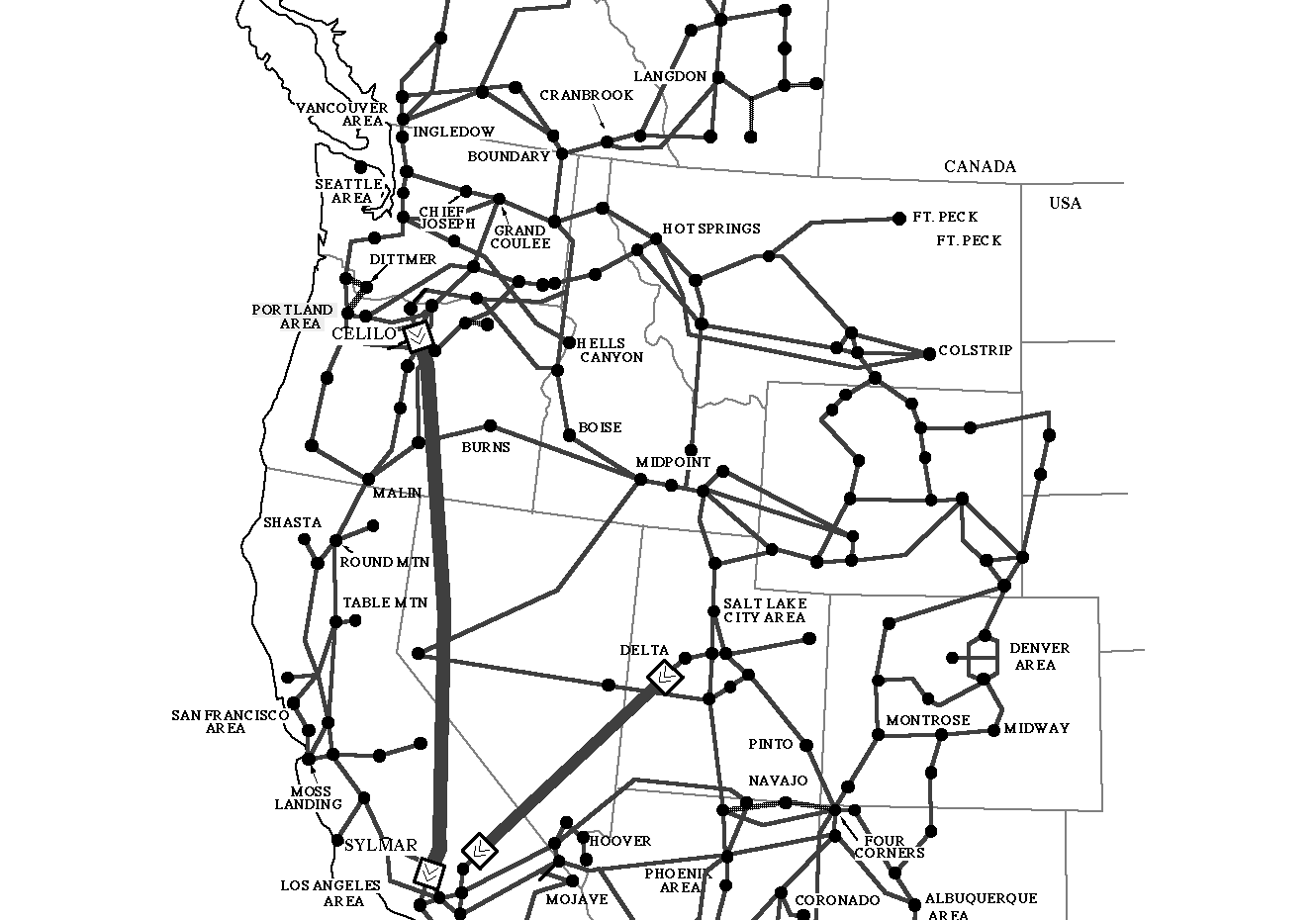

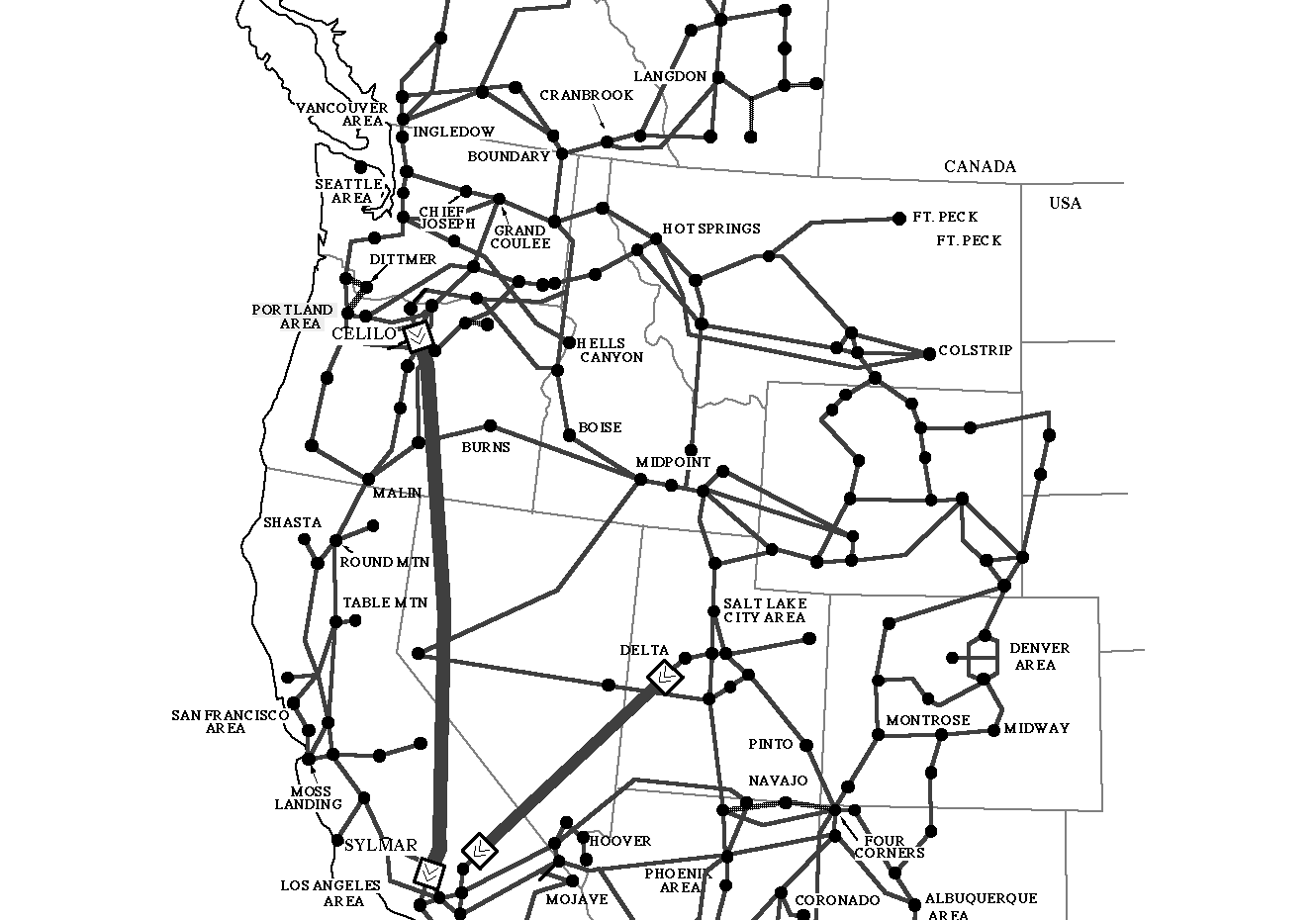

The more severe of the WSCC breakups were true "cascading outages," in which events at many different locations contributed to final failure. The map shown in Fig. 1 shows the more important locations mentioned in the descriptions to follow.

Fig. 1. General structure of the western North America power system.

- WSCC Breakup (earthquake): January 17, 1994 []

At 04:31 a.m. a magnitude 6.6 earthquake occurred in the vicinity of Los Angeles, CA. Damage to nearby electrical equipment was extensive, and some relays tripped through mechanical vibrations. Massive loss of transmission resources triggered a rapid breakup of the entire western system. Disruption in the Pacific Northwest was considerably reduced through first-time operation of underfrequency load shedding controls [], which operated through 2 of their 7 levels. There was considerable surprise among the general public, and in some National policy circles, that an earthquake in southern California would immediately impact electrical services so far away as Seattle and western Canada.

- WSCC Breakup: December 14, 1994 []

The Pacific Northwest was in a winter import condition, bringing about 2500 MW from California and about 3100 MW from Idaho plus Montana. Import from Canada into the BPA service area totaled about 1100 MW. At 01:25 a.m. local time, insulator contamination near Borah (in SE Idaho) faulted one circuit on a 345 kV line importing power from the Jim Bridger plant (in SW Wyoming). The circuit tripped properly, but another relay erroneously tripped a parallel circuit; bus geometry at Borah the forced a trip of the direct 345 kV line from Jim Bridger. Sustained voltage depression and overloads tripped other nearby lines at 9, 41, and 52 seconds after the original fault. The outage then cascaded throughout the western system, thorough transient instability and protective actions. The western power system fragmented into 4 islands a few seconds later.

Extreme swings in voltage and frequency produced widespread generator tripping. Responding to these swings, various controls associated with the Intermountain Power Project (IPP) HVDC line, from Utah to Los Angeles, cycled its power from 1678 to 2050 to 1630 to 2900 to 0 MW. This considerably aggravated an already complex problem. Slow frequency recovery in some islands indicated that governor response was not adequate. Notably, the Pacific Northwest load shedding controls operated through 6 of their 7 levels.

- WSCC Breakup: July 2, 1996 [,,]

Hot weather had produced heavy loads throughout the west. Abundant water supplies powered fairly heavy imports of energy from Canada (about 1850 MW) and through the BPA service area into California. Despite the high stream flow, environmental mandates forced BPA to curtail generation on the lower Columbia River as an aid to fish migration. This reduced both voltage support and "flywheel" support for transient disturbances, in an area where both the Pacific AC Intertie (PACI) and the Pacific HVDC Intertie (PDCI) originate. This threatened the ability of those lines to sustain heavy exports to California, and — with the northward shift of the generation center — it increased system exposure to north-south oscillations (Canada vs. Southern California and Arizona). The power flow also involved unusual exports from the Pacific Northwest into southern Idaho and Utah, with Idaho voltage support reduced by a maintenance outage of the 250 MVA Brownlee #5 generator near Boise.

At 02:24 p.m. local time, arcing to a tree tripped a 345 kV line from the Jim Bridger plant (in SW Wyoming) into SE Idaho. Relay error also tripped a parallel 345 kV line, initiating trip of two 500 MW generators by stability controls. Inadequate reserves of reactive power produced sustained voltage depression in southern Idaho, accompanied by oscillations throughout the Pacific Northwest and northern California. About 24 seconds after the fault, the outage cascaded through tripping of small generators near Boise plus tripping of the 230 kV "Amps line" from western Montana to SE Idaho. Then voltage collapsed rapidly in southern Idaho and — helped by false trips of 3 units at McNary — at the north end of the PACI. Within a few seconds the western power system was fragmented into five islands, with most of southern Idaho blacked out.

On the following day, the President of the United States directed the Secretary of Energy to provide a report that would commence with technical matters but work to a conclusion that "Assesses the adequacy of existing North American electric reliability systems and makes recommendations for any operational or regulatory changes." The Report was delivered on August 2, just eight days before the even greater breakup of August 10 1996. The July 2 Report provides a very useful summary framework for the many analyses and reports that have followed since.

- WSCC "Near Miss:" July 3, 1996 [,]

Conditions on July3 were generally similar to those of July 2, but with somewhat less stress on the network. BPA’s AC transfer limits to California had been curtailed (to 4000 MW instead of 4800 MW), and resumed operation of the Brownlee #5 generator improved Idaho voltage support. The arc of July 2 recurred — apparently to the same tree — and the same faulty relay lead to the same protective actions at the Jim Bridger plant. Plant operators added to the ensuing voltage decline by reducing reactive output from the Brownlee #5 generator. System operators, however, successfully arrested the decline by dropping 600 MW of customer load in the Boise area. The troublesome tree was removed on July 5.

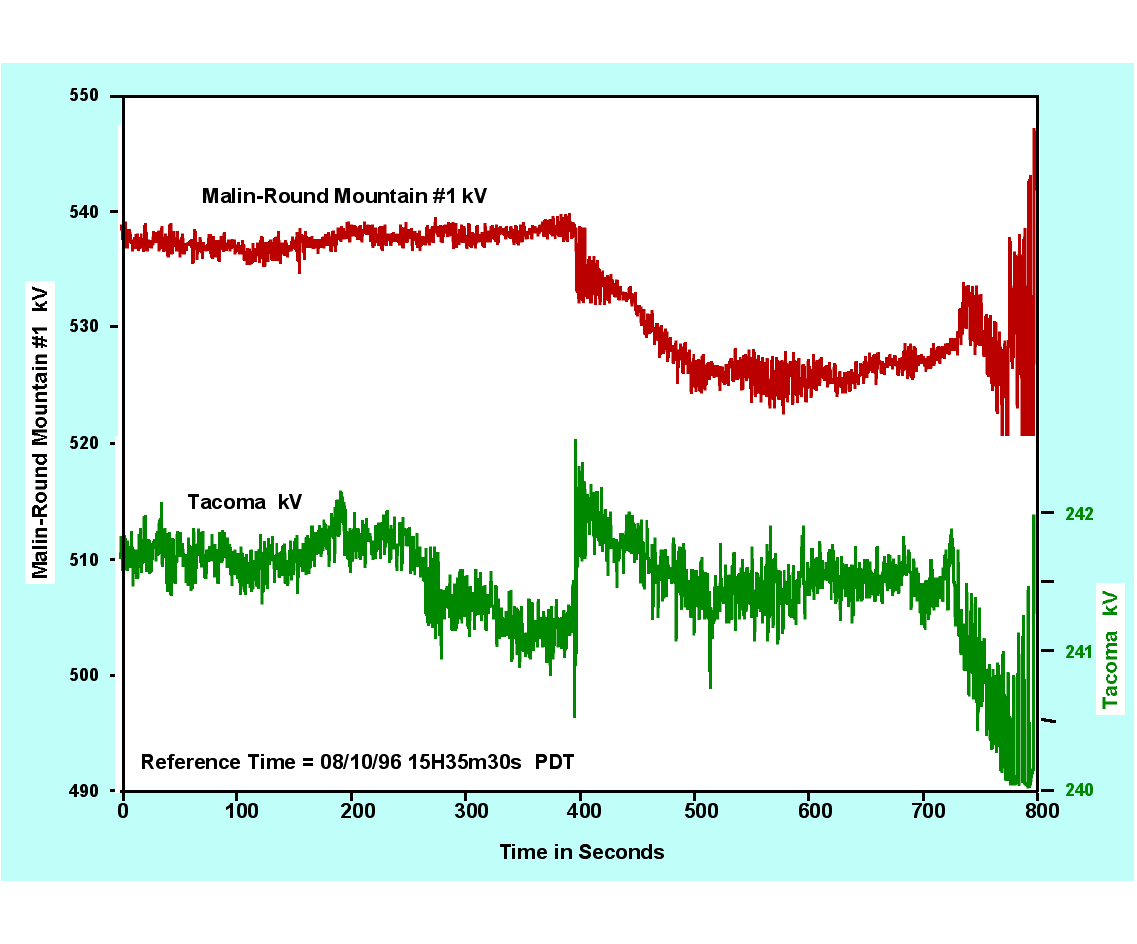

- WSCC Breakup: August 10, 1996 [, ,,]

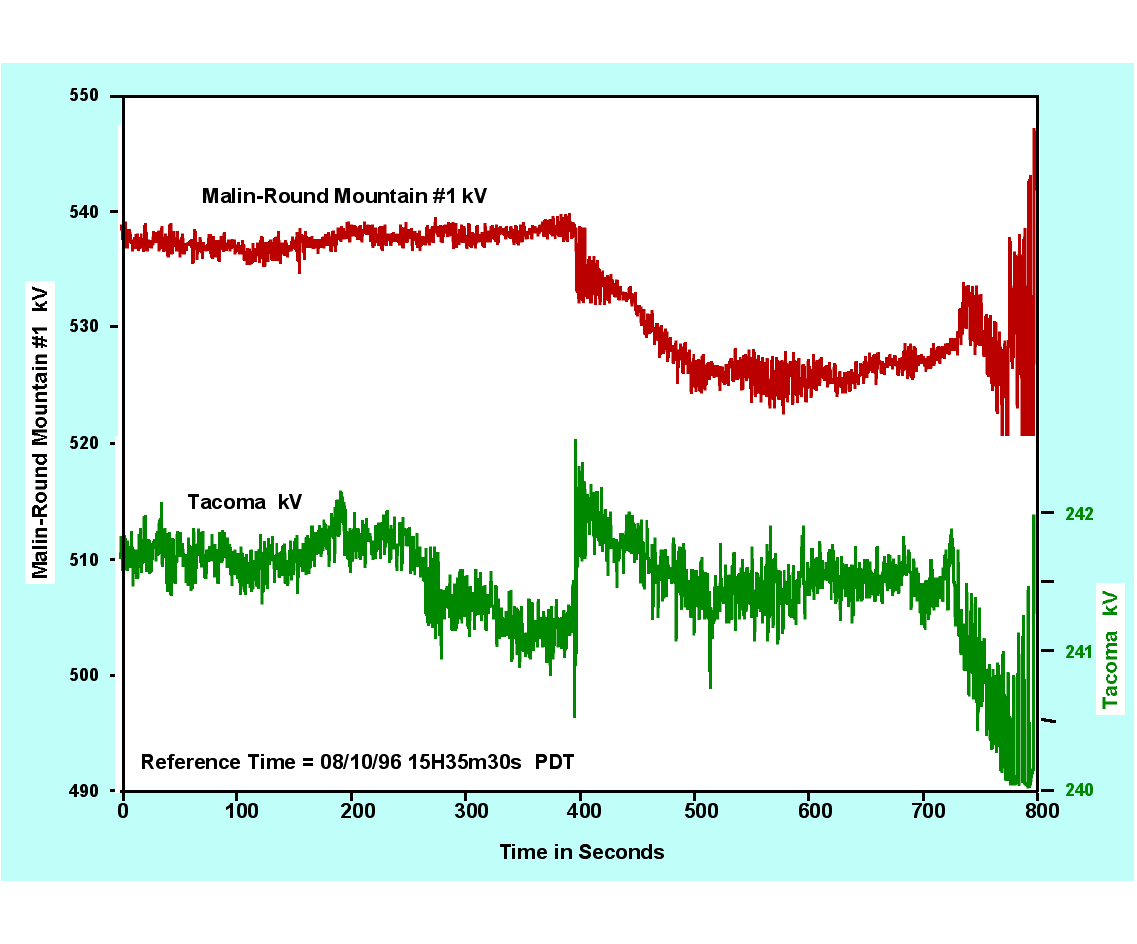

Temperatures and loads were somewhat higher than on July 2. Northwest water supplies were still abundant — unusual for August — and the import from Canada had increased to about 2300 MW. The July 2 environmental constraints on lower Columbia River generation were still in effect, reducing voltage and inertial support at the north ends of the PACI and PDCI. Over the course of several hours, arcs to trees progressively tripped a number of 500 kV lines near Portland, and further weakened voltage support in the lower Columbia River area. This weakening was compounded by a maintenance outage of the transformer that connects a static VAR compensator in Portland to the main 500 kV grid.

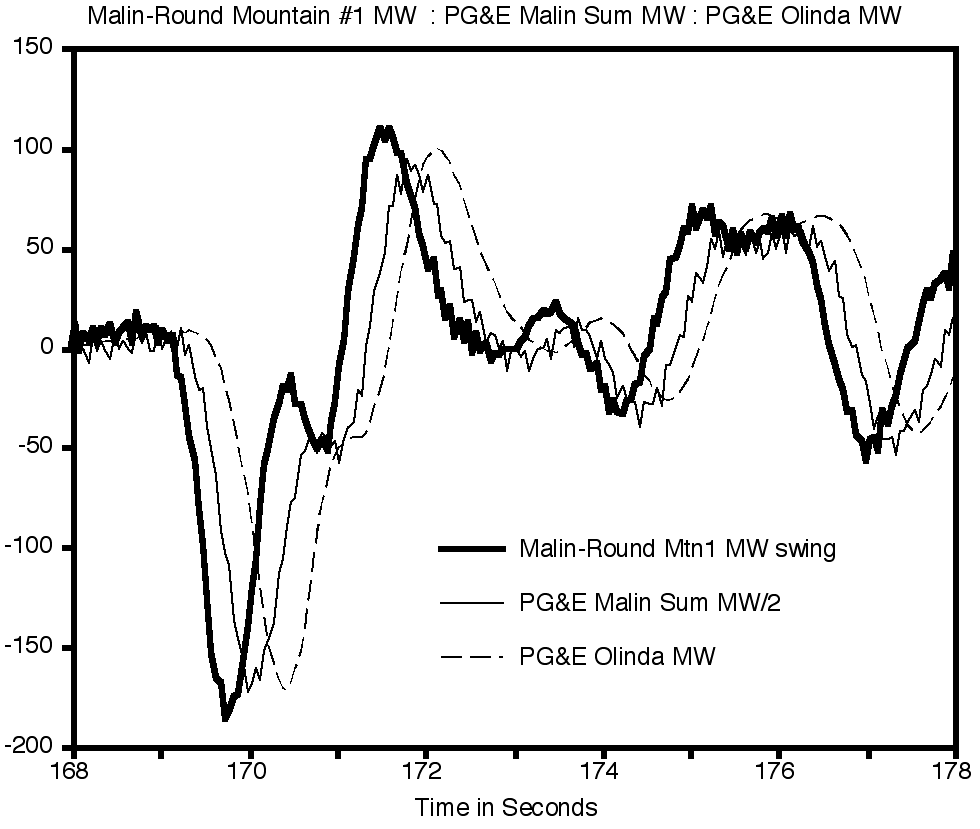

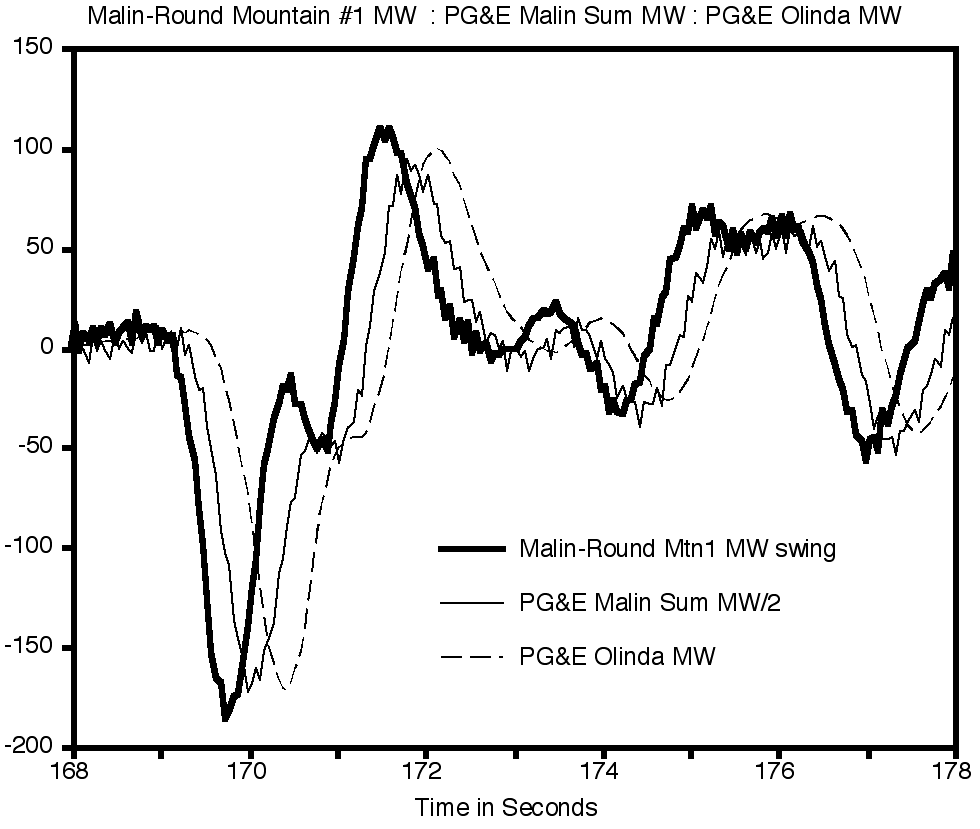

The critical line trip occurred at 13:42 p.m., with loss of a 500 kV line (Keeler-Allston) carrying power from the Seattle area to Portland. Much of that power then detoured from Seattle to Hanford (in eastern Washington) and then to the Portland area, twice crossing the Cascade Mountains. The electrical distance from the Canada generation to Southwest load was then even longer than just before the July 2 breakup, and the north-south transmission corridor was stretched to the edge of oscillatory instability. Near Hanford, the McNary plant became critical for countering a regional voltage depression but was hard pressed to do so. Three smaller plants near McNary might have assisted but were not controlled for this. Strong hints of incipient oscillations spread throughout the northern half of the power system.

Final blows came at 15:47:36. The heavily loaded Ross-Lexington 230 kV line (near Portland) was lost through yet another tree fault. At 15:47:37, the defective relays that erroneously tripped McNary generators on July 2 struck again. This time the relays progressively tripped all 13 of the units operating there. Governors and the automatic generation control (AGC) system attempted to make up this lost power by increasing generation north of the cross-Cascades detour. Growing oscillations — perhaps aggravated by controls on the PDCI [] — produced voltage swings that severed the PACI at 15:48:52. The outage quickly cascaded through the western system, fracturing it into four islands and interrupting services to some 7.5 million customers.

One unusual aspect of this event was that the Northeast-Southeast Separation Scheme, for controlled islanding under emergency conditions, had been removed from service. As a result the islanding that did occur was delayed, random, and probably more violent than would have otherwise been the case. Other unusual aspects include the massive loss of internal generation within areas that were importing power (e.g., were already generation deficient) and the damage to equipment. Some large thermal and nuclear plants remained out of service for several days.

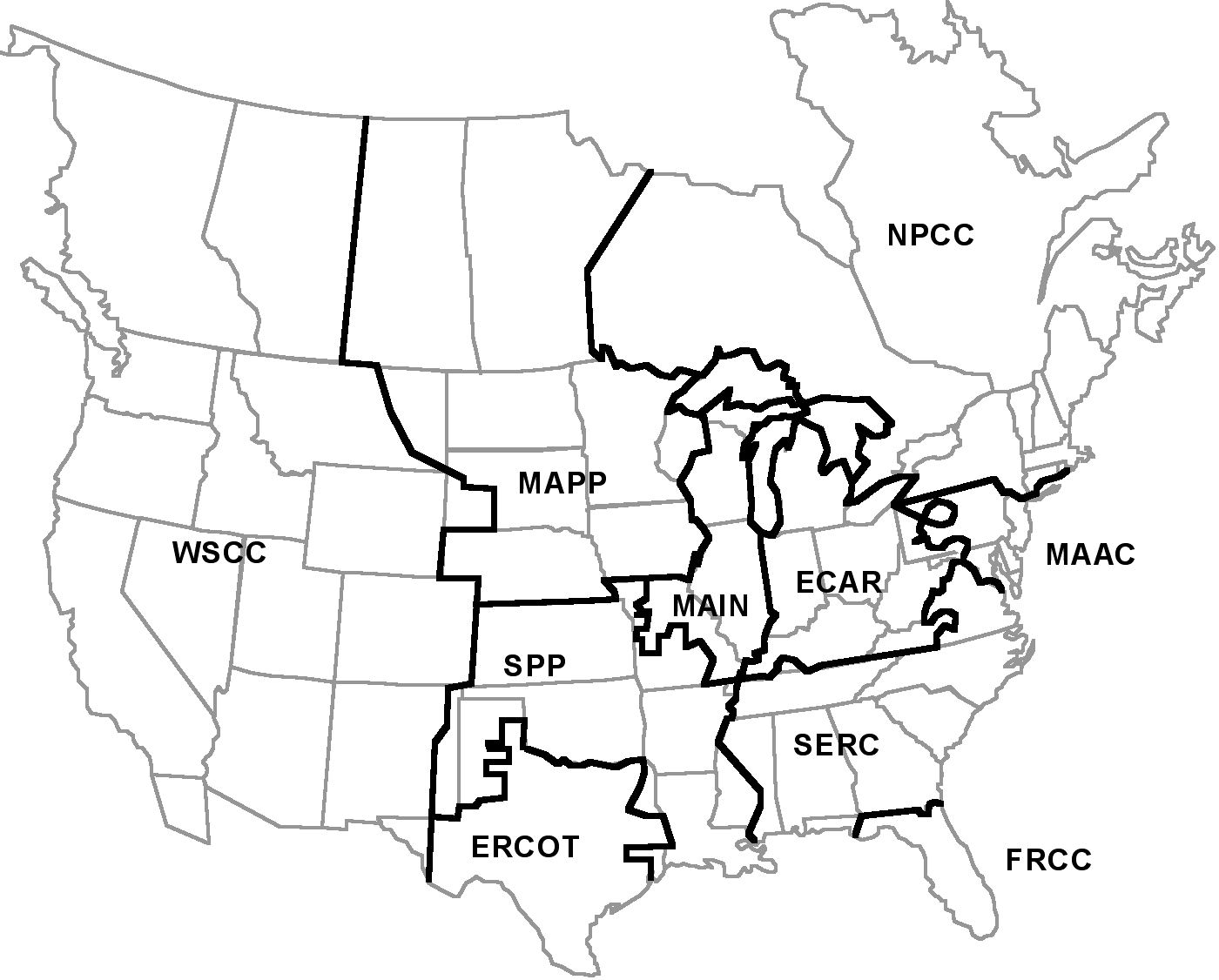

- Minnesota-Wisconsin Separation and "Near Miss:" June 11-12, 1997 [,]

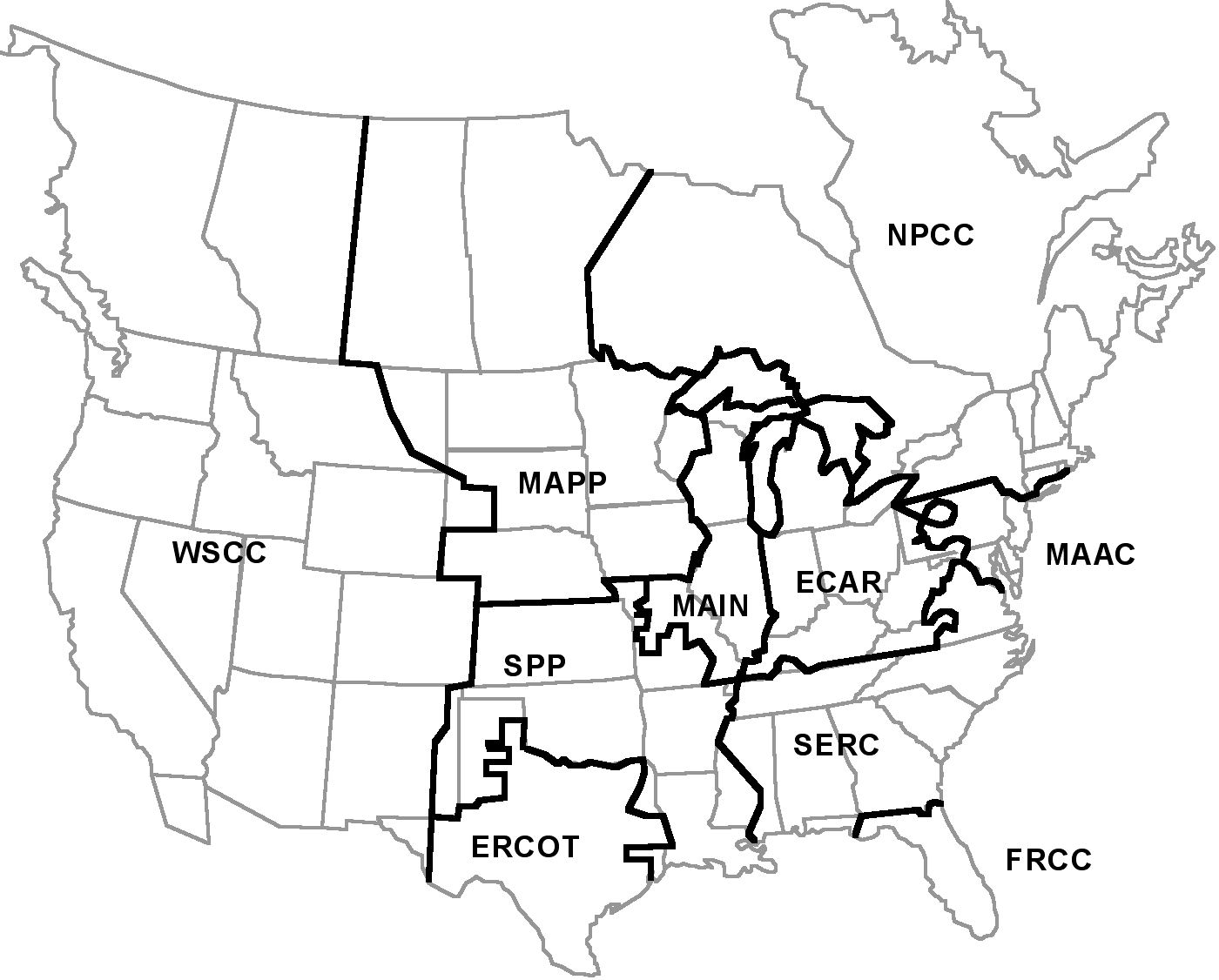

This event started with heavy flows of power from western MAPP and Manitoba Hydro eastward into MAIN and southward into SPP (see Fig. 2). Partly a commercial transport of lower cost power, the eastward flow was also needed to offset generation shortages in MAIN.

Fig. 2. Geography and Regional Reliability Councils for the North America power system (courtesy of B. Buehring, Argonne National Laboratory)

The event started shortly after midnight, when the 345 kV King-Eau Claire-Arpin-Rocky Run-North Appleton line from Minneapolis — St. Paul into Wisconsin opened at Rocky Run. Apparently this was caused by a relay that acted below its current setting, due to unbalanced loads or to a dc offset. This led to a protracted loss of the Eau Claire — Arpin 345 kV line, which could not be reclosed because of the large phase angle across the open breaker at Arpin. This produced a voltage depression across SW Wisconsin, eastern Iowa, and NE Illinois plus heavily loading of the remaining grid. Regional operators maneuvered their generation to relieve the situation and, some two hours later, the line was successfully reclosed. Later analysis indicates that the MAPP system "came within a few megawatts of a system separation," which might well have blacked out a considerable area []

- MAPP Breakup: June 25, 1998 [,]

This event started under conditions that were similar to those for June 11, 1997. Power flows from western MAPP and Manitoba Hydro into MAIN and SPP were heavy but within established limits. There was also a severe thunderstorm in progress, moving eastward across the Minneapolis—St. Paul area.

The initiating event occurred at 01:34 a.m., when a lightning stroke opened the 345 kV Prairie Island — Byron line from Minneapolis—St. Paul into Iowa and St. Louis. Immediate attempts to reclose this line failed due to excessive phase angle. As for the June 11 event, the operators then undertook to reduce the line angle by maneuvering generation. Another major event occurred before the line was restored, however. At 02:18 a.m., the storm produced a lightning stroke that opened the heavily loaded King-Eau Claire 345 kV line toward Wisconsin and Chicago. A cascading outage then rippled through the MAPP system, separating all of the northern MAPP system from the eastern interconnection and progressively breaking it into three islands. The records show both out-of-step oscillations between asynchronous regions of the system, and other oscillations that may not be explained as yet. Apparently there were also some problems with supplemental damping controls on the two HVDC lines from N. Dakota into Minnesota. The separated area spanned large portions of Montana, North Dakota, South Dakota, Minnesota, Wisconsin, Manitoba, Saskatchewan, and northwest Ontario.

The length of time between these two "contingencies" — some 44 minutes — is important. NERC operating criteria state that recovery from the first contingency should have taken place within 30 minutes (either through reduced line loadings or by reclosing the open line). MAPP criteria in effect at the time (and since replaced by those of NERC) allowed only 10 minutes. Criteria are not resources, though, and the operators simply lacked the tools that the situation required. Apparently they had brought the line angle within one or two degrees of the (hardwired) 40° closure limit, and a manual override of this limit would have been fully warranted. There were no provisions for doing this, however, so they were forced to work through a Line Loading Relief (LLR) procedure that had not yet matured enough to serve to the needs of the day. Other sources indicate that major improvements have been make since.

Though modeling results are not presented, the Report for this breakup is otherwise very comprehensive and exceptionally informative. As a measure for the complexity of this breakup, the Report states that "WAPA indicated that their SCADA system recorded approximately 10,000 events, alarms, status changes, and telemetered limit excursions during the disturbance." The Report then mentions some loss of communications and of SCADA information, apparently through data overload.

The Report also states that "The Minnesota Power dynamic system monitors which have accurate frequency transducers and GPS time synchronization were invaluable in analyzing this disturbance and identifying the correct sequence of events in many instances," even though recording was piecemeal and overall monitor coverage for the system was quite sparse. These insights closely parallel those derived from WSCC disturbances.

- NPCC Ice Storm: January 5-10, 1998 []

During this period a series of exceptionally severe ice storms struck large areas within New York, New England, Ontario, Quebec, and the Maritimes. Freezing rains deposited ice ranging in thickness to 3 inches, and were the worst ever recorded in that region. Resulting damage to transmission and distribution was characterized as severe (more than 770 towers collapsed).

This event underscores some challenging questions as to how, and how expensively, physical structures should be reinforced against rare meteorological conditions. It also raises some difficult questions as to how utilities should plan for and deal with multiple contingencies that are causally linked (not statistically independent random events).

The main lessons, though, deal with system restoration. Emergency preparedness, cooperative arrangements among utilities and with civil authorities, integrated access to detailed outage information, and an innovative approach to field repairs were all found to be particularly valuable. The disturbance report mentions that information from remotely accessible microprocessor based fault locator relays was instrumental in quickly identifying and locating problems. Implied in the report is that the restoration strategy amounted to a "stochastic game" in which some risks were taken in order to make maximum service improvements in least time — and with imperfect information on system capability.

- San Francisco Tripoff: December 8, 1998 []

Initial reports indicate that this event occurred when a maintenance crew at the San Mateo substation re-energized a 115 kV bus section with the protective grounds still in place. Unfortunately, the local substation operator had not yet engaged the associated differential relaying that would have isolated and cleared just the affected section. Other relays then tripped all five lines to the bus, triggering the loss of at least twelve substations and all power plants in the service area (402 MW vs. a total load of 775 MW). Restoration was hampered by blackstart problems, and by poor coordination with the California ISO.

Geography contributed to this event. Since San Francisco occupies a densely developed peninsula, the present energy corridors into it are limited and it would be difficult to add new ones. It is very nearly a radial load, and thereby quite vulnerable to failures at the few points where it connects to the main grid. The situation is well known, and many planning engineers have hoped for at least one more transmission line or cable into the San Francisco load area.

- "Price Spikes" in the Market

The new markets in electricity have experienced occasionally severe "price spikes" as a result of scarcity or congestion. The reliability implications of this are not clear. Some schools of thought hold that such prices provide a needed incentive to investment, and represent "the market at its best." Others suspect that, in some cases at least, the scarcity or congestion have been deliberately produced in order to drive prices up. In either event, the price spikes themselves may well be indicators for marginal reliability. These matters will be examined more closely in other elements of the CERTS effort.

- The Hot Summer of 1999

Analogous to the winter ice storms of 1998, protracted "heat storms" struck much of eastern North America during the summer of 1999 [,,]. Several of these were unusual in respect to their timing, temperature, humidity, duration, and geographical extent. Past records for electrical load were broken, and broken again. Voltage reductions and interruption of managed loads were useful but not sufficient in dealing with the high demand for electrical services. News releases reported heat related deaths in Chicago, outages in New York City, and rolling blackouts in many regions.

Unlike the other reliability events already considered here, these particular incidents did not involve significant disturbances to the main transmission grid. One of most conspicuous factors was heat-induced failure of aging distribution facilities, especially in the highly urbanized sections of Chicago [] and New York City []. Sustained hot weather also caused many generators to perform less well than expected, and it lead to sporadic generator outages through a variety of indirect mechanisms.

The most conspicuous problem was a very fundamental one. For many different reasons, a number of regions were confronted — simultaneously — by a shortfall in energy resources. Operators in some systems saw strong but unexpected indications of voltage collapse. The general weakening of the transmission grid hampered long distance energy transfers from areas where extra generation capacity did exist, and it severely tested the still new emergency powers of the central grid operators. These events have raised some very pointed questions as to what constitutes adequate electrical resources, and whether the new market structures can assure them. These matters are now being addressed by the DOE, NERC, EPRI, and various organizations. DOE Energy Secretary Richardson has established a Post-Outage Study Team (POST) for this purpose [].

The 1999 heat storm events also point toward various technical problems:

- Forecasting. Historical experience and short-term weather forecasts underestimated both the severity of the hot weather and the degree to which it would increase system loads.

- Planning practices.

- The widespread weather problems, compounded by complexities of the new market, produced patterns of operation that system planners had not been able to fully assess in advance.

- Market pricing encourages increased reliance upon very remote generation. Energy production and transport are now vulnerable to new contingencies far outside the service area.

- Planning models displayed several weaknesses:

- The proximity of system voltage collapse seems to have been rather greater than models indicated. Simplified representation of air conditioning loads is believed to be a factor in this.

- Generator capabilities and performance were less than models indicated. This is especially noticeable for gas fired combustion turbines, which also seem to self-protect more readily than models indicate.

- The overall impact of hot weather on forced outage rates seems to have been underestimated.

- In real time, some operators lacked resources that would have let them anticipate and/or manage the emergencies better. Newer technologies for monitoring of cable systems would have been very helpful [].

- Better recordings for operational data would have facilitated after-the-fact improvements in system planning, and to the system itself.

- Maintenance scheduling. Several of these events occurred while key generation was undergoing scheduled maintenance between seasonal load peaks, or during a forced extension of such maintenance.

- Maintenance practices raise difficult issues in risk management. The maintenance process itself can pose a threat to the system, both by removing facilities from service and by sometimes damaging those facilities. Some newer types of high power distribution cables seem especially vulnerable to this.

Counterparts to these technical problems have already been seen in the main-grid reliability events of earlier Sections, for systems further west. They have also been seen in earlier reliability events in the same areas. Many technical aspects of the June 1999 heat storm [] experienced by the Northeast Power Coordinating Council (NPCC) are remarkably similar to those of the cold weather emergency there in January 1994 [].

The 1999 heat storm events offer many contrasting performance examples for grid operation systems and regional markets during emergency conditions. Performance of the NPCC and its member control areas during the generation shortage of June 7-8 seems notably good. Had this not been so, the emergency could have been devastating to highly populated regions of the U.S. and Canada. That this did not happen is due to exemplary coordination among NPCC and the member control areas, within the framework of a relatively young market that is still adapting to new rules and expectations. The information from this experience should be valuable to regions of North America where deregulation and restructuring are less advanced.

- The Aftermath of Major Disturbances

The aftermath of a major disturbance can be a period of considerable trial to the utilities involved. Their response to it can be a major challenge to their technical assets, and to the reliability council through which they coordinate the work. The quality of that response may also be the primary determinant for immediate and longer-term costs of the disturbance.

Most immediately, there is the matter of system restoration (electrical services and system facilities). This will almost certainly involve an engineering review, both to understand the event and to identify countermeasures. Such countermeasures may well involve revised procedures for planning and operation, selective de-rating of critical equipment, and installation of new equipment. The engineering review may also factor into high level policies concerning the balance between the cost and the reliability of electrical services.

If restoration proceeds smoothly and promptly then the immediate costs of the disturbance will be comparatively modest. These costs may rise sharply as an outage becomes more protracted, however. There is an increased chance that abnormally loaded equipment must either sustain damage or protect itself by tripping off. (This is the classic mechanism by which a small outage cascades into a large one.) Some remaining generation may just deplete their reserves of fuel or stored energy. Also, loads that have already lost power differ in their tolerance for outage duration. Spoilage of refrigerated food, freezing of molten metals, and progressive congestion of transportation systems are well known examples of this.

In most cases electrical services are restored within minutes to a few hours at most. Full restoration of system facilities to their original capability may require repairs to equipment that was damaged during the outage itself, or during services restoration. The 1994 earthquake and the New York City blackout of 1977 demonstrate how extensive these types of damage may be. Long-term costs of an outage accumulate during the repair period, and the repairs themselves may be much less expensive than those of new operating constraints for the weakened system.

Full repairs do not necessarily lead to full operation. New and more conservative limits may be imposed in light of the engineering review, or as a consequence of new policies. To an increasing extent, curtailed operation may also result from litigation or the fear of it [,,]. This consideration is antithetical to the candid exchange of technical information that is necessary to the engineering review process, and to an effective reliability council based upon voluntary cooperation among its members.

- Recurring Factors in North American Outages

The outages described in this Section span a period of more than thirty years. Even so, certain contributing factors recur throughout these summary descriptions and the more detailed descriptions that underlie them. There are ubiquitous problems with

- Protective controls (relays and relay coordination)

- Unexpected or unknown circumstances

- Understanding and awareness of power system phenomena (esp. voltage collapse)

- Feedback controls (PSS, HVDC, AGC)

- Maintenance

- "Operator error"

The more important technical elements that these problems reflect are discussed below, and in later Sections. Human error is not listed, simply because — at some remove — it underlies all of the problems shown.

Disturbance reports and engineering reviews frequently state that some particular system or device "performed as designed" — even when that design was clearly inappropriate to the circumstances. Somewhere, prior to this narrowly defined design process, there was an error that led to the wrong design requirements. It may have been in technical analysis, in the general objectives, or in resources allocation — but it was a human error, and embedded in the planning process [,].

- Protective Controls — Relays

Disturbance reports commonly cite relay misoperation as the initiator or propagator of a system disturbance. Sometimes this is traced to nothing more than neglected maintenance, obsolescent technology, or an inappropriate class of relay. More often, though, the offending relay has been "instructed" improperly, with settings and "intelligence" that do not match the present range of operating conditions. There have been many problems with relays that "overreach" in their extrapolation of local measurements to distant locations.

Proper tools and appropriate policies for relay maintenance are important issues. More important, though, is the "mission objective" for those relays that are critical to system integrity. Most relays are intended to protect local equipment. This is consistent with the immediate interests of the equipment owner, and with the rather good rationale that intact equipment can resume service much earlier than damaged equipment.

But, arguing against this, there have been numerous instances where overly cautious local protection has contributed to a cascading outage. Deferred tripping of critical facilities may join the list of ancillary services for which the facilities owner must be compensated [].

- Protective Controls — Relay Coordination

Containing a sizeable disturbance will usually require appropriate action by several relays. There are several ways to seek the needed coordination among these relays. The usual approach is to simulate the "worst case" disturbances and then set the relays accordingly. Communication among the relays is indirect, through the power system itself. The quality of the coordination is determined by that of the simulation models, and by the foresight of the planners who use them.

In the next level of sophistication of relay coordination, some relays transmit "transfer trip" signals to other relays when they recognize a "target." Such signals can be used to either initiate or block actions by the relays that receive them. Embellished with supervisory controls and other "intelligence," the resulting network can be evolved into a wide area control system of a sort used very successfully in the western power system and throughout the world.

Direct communication among relays makes their coordination more reliable — in a hardware sense — but correctness of the design itself must still be addressed. Apparently there are difficulties with this, both a-priori and in retrospect. Relays, like transducers and feedback controllers, are signal processing devices that have their own dynamics and their own modes of failure. Some relays sense conditions (like phase imbalance or boiler pressure) that power system planners cannot readily model. Overall, the engineering tools for coordinating wide area relay systems seem rather sparse.

Beyond all this, it is also apparent that large power systems are sometimes operated in ways that were not foreseen when relay settings were established. It is not at all apparent that fixed relay settings can properly accommodate the increasingly busy market or, worse yet, the sort of islanding that has been seen recently in North America. It may well be that relay based controls, like feedback controls, will need some form of parameter scheduling to cope with such variability. The necessary communications could prove highly attractive to information attack, however, and precautions against this growing threat would be mandatory [,].

Several of the events suggest that there are still some questions to be resolved in the basic strategy of bus protective systems, or perhaps in their economics. In the breakup of December 14, 1994, it appears that "bus geometry" forced an otherwise unnecessary line trip at Borah and lead directly to the subsequent breakup. In the San Francisco tripoff of December 8, 1998, a bus fault there tripped all lines to the San Mateo bus because the differential relay system had not been fully restored to service. An "expert system" might have advised the operator of this condition, and perhaps even performed an impedance check on the equipment to be energized.

- Unexpected Circumstances

Nearly two decades ago, at a panel session on power system operation, it was stated that major disturbances on the eastern North America system generally occurred with something like six major facilities already out of service (usually for maintenance). The speaker then raised the question "What utility ever studies N-6 operation of the system?"

This pattern is very apparent in the events described above, and in many other disturbances of lesser impact. WSCC response, in the wake of the August 10 breakup, is an announced policy that "The system should only be operated under conditions that have been directly studied." Implicit in the dictum is that the studies should use methods and models known to be correct. Too often, that correctness is just take for granted.

One result of this policy is that many more studies must be performed and evaluated. To some extent, study results will affect maintenance scheduling and possibly delay it. Dealing with unscheduled outages, of the sort that occur incessantly in a large system, is made more difficult just by the high number of combinations that must be anticipated. The best approach may be to narrow the range of combinations by shortening the planning horizon. This would necessarily call for powerful simulation tools, with access to projected system conditions and with special "intelligence" to assist in security assessment.

In the limit, such tools for security assessment would draw near-real-time information from both measurements and models taken from system itself. They might also provide input to higher level tools, for reliability management, that advise the future grid operator in his continual balancing of system reliability against system performance.

- Circumstances Unknown to System Operators

There are many instances where system operators might have averted some major disturbance if they had been more aware of system conditions. An early case of this can be found in the 1965 Northeast Blackout, when operators unknowingly operated above the unnecessarily conservative thresholds of key relays. More recently, just prior to the August 10 breakup, it is possible that some utilities would have reshaped their generation and/or transmission had they known that so many lines were out of service in the Portland area. The emerging Interregional Security Network, plus various new arrangements for exchange of network loading data, are improving this aspect of the information environment.

Operator knowledge of system conditions may be of even greater value during restoration. The alacrity and smoothness of system restoration are prime determinators of disturbance cost, and the operators are of course racing to brace the system against whatever contingency may follow next. Restoration efforts following the 1998 NPCC Ice Storm and the 1997-1998 MAPP events seem typical of recent experience. The need for integrated information and "intelligent" restoration aids is apparent, and the status of relevant technology should be determined. Analogous problems exist for load management during emergencies at distribution level [].

In the past, it has commonly happened that critical system information was available to some operators but not to those who most needed it. Inter-utility sharing of SCADA data, together with inclusion of more data and data types within SCADA, have considerably improved this aspect of the problem. The new bottleneck is "data overload" — information that is deeply buried in the data set is still not available to the operators, or to technical staff.

Alarm processing has received considerable attention over the years, but continual improvements will be needed (note the 10,000 SCADA events recorded by WAPA for the 1998 MAPP breakup). Alarm generation itself is an important topic. The August 10 Breakup demonstrates the need for tools that dig more deeply into system data, searching out warning signs of pending trouble. (The potential for this is shown in a later Section.) Such tools are also needed in the security assessment and reliability management processes.

Information shortfalls can also be a serious and expensive handicap to the engineering review that follows a major disturbance. Much of this review draws upon operating records collected from many types of device (not just SCADA). At present the integration of such records is done as a manual effort that is both ad hoc and very laborious. Data is contributed voluntarily by many utilities, in many dissimilar formats. For cascading outages like those in 1996, the chance that essential data will be lost from the recording system — or lost in the recording system — are quite substantial. The following examples are instructive in this respect:

- Loss of the "Amps line," from western Montana into southeastern Idaho, was a decisive event in the WSCC breakup of July 2, 1996. The engineering team reviewing the event did not discover that this line had been lost until some 20 days after the breakup, however. In the meanwhile, lead-time and critical engineering resources were expended in a struggle with the wrong problem.

- Loss of generation was a decisive causative factor in the August 10 breakup. The list of generators actually lost was still incomplete three months later.

- The best analyses to date indicate that the performance of feedback controls in the Pacific Southwest was another decisive factor in the August 10 breakup. Surviving records of this performance are fragmented at best, and it is rumored that many of the records taken were overstored or otherwise lost.

Countermeasures to such problems are discussed further in [,,]. Chief among these are a system-wide information manager that assures reliable data retention and access, and an associative data miner for extracting pertinent information from the various data bases. It is assumed that these would include text files (operator logs and technical reports) as well as numerical data.

- Understanding Power System Phenomena

There is a tendency to underestimate the complexity of behavior that a large power system can exhibit. As a system increases in size, or is interconnected with other systems nearby, it may acquire unexpected or pathological characteristics not found in smaller systems []. These characteristics may be intermittent, and they may be further complicated by subtle interactions among control systems or other devices [,,]. This is an area of continuing research, both at the theoretical level and in the direct assessment of observed system behavior.

Some phenomena are poorly understood even when the underlying physics is simple. Slow voltage collapse is an insidious example of this [,,,] and there are numerous accounts of perplexed operators struggling in vain to rescue a system that was slowly working its way toward catastrophic failure. The successful actions taken on July 3 show that the need for prompt load dropping has been recognized, and recent WSCC breakups demonstrate the value of automatic load shedding thorough relay action. Even so, on August 10 the BPA operators were not sufficiently aware that their reactive reserves had been depleted, they had few tools to assess those reserves, and load shedding controls were not in wide use outside the BPA service area.

Large scale oscillations can be another source of puzzlement, to operators and planners alike. Observations observed in the field may originate from nonlinear phenomena, such as frequency differences between asynchronous islands or interactions with saturated devices []. It is very unlikely that any pre-existing model will replicate such oscillations, and it is quite possible that operating records will not even identify the conditions or the equipment that produced them. Situations of this kind can readily escalate from operational problems into serious research projects.

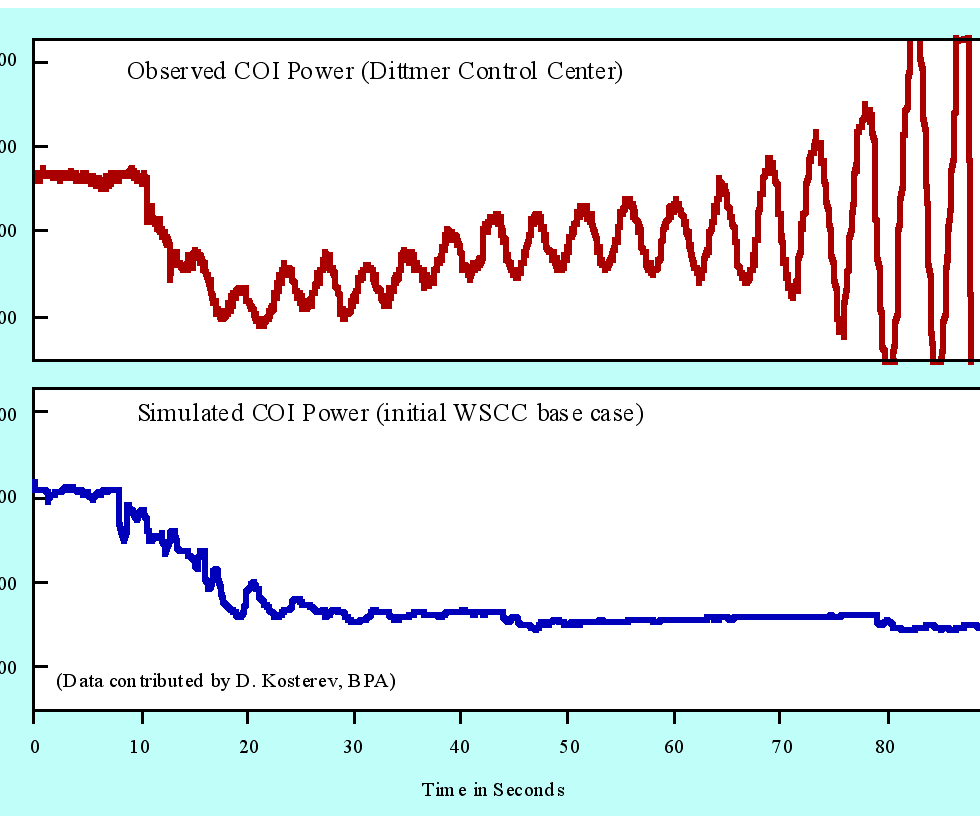

Similar problems arise even for the apparently straightforward linear oscillations between groups of electrical generators. WSCC planning models have been chronically unrealistic in their representation of oscillatory dynamics, and have progressively biased the engineering judgement that underlies the planning process and the allocation of operational resources. Somewhere, along the way to the August 10 breakup, the caveats associated with high imports from Canada were forgotten. One partial result of this is that both planners and operators there have been using just computer models, and time-domain tools, to address what is fundamentally a frequency domain problem requiring information from the power system itself. Better tools — and better practices — would provide better insight.

Disabling of the north-south separation scheme suggests a lack of appreciation for the value of controlled islanding in a loosely connected power system.. Once they are in progress, the final line of defense against widespread oscillations is to cut one or more key interaction paths, and this is what controlled islanding would have done. Without this, on August 10 the western system tore itself apart along random boundaries, rather than achieving a clean break into predetermined and self sufficient islands. Future versions of the separation scheme should be closely integrated into primary control centers, where the information necessary for more advanced islanding logic is more readily available. Islanded operation should also be given more attention in system planning, and in the overall design of stability controls.

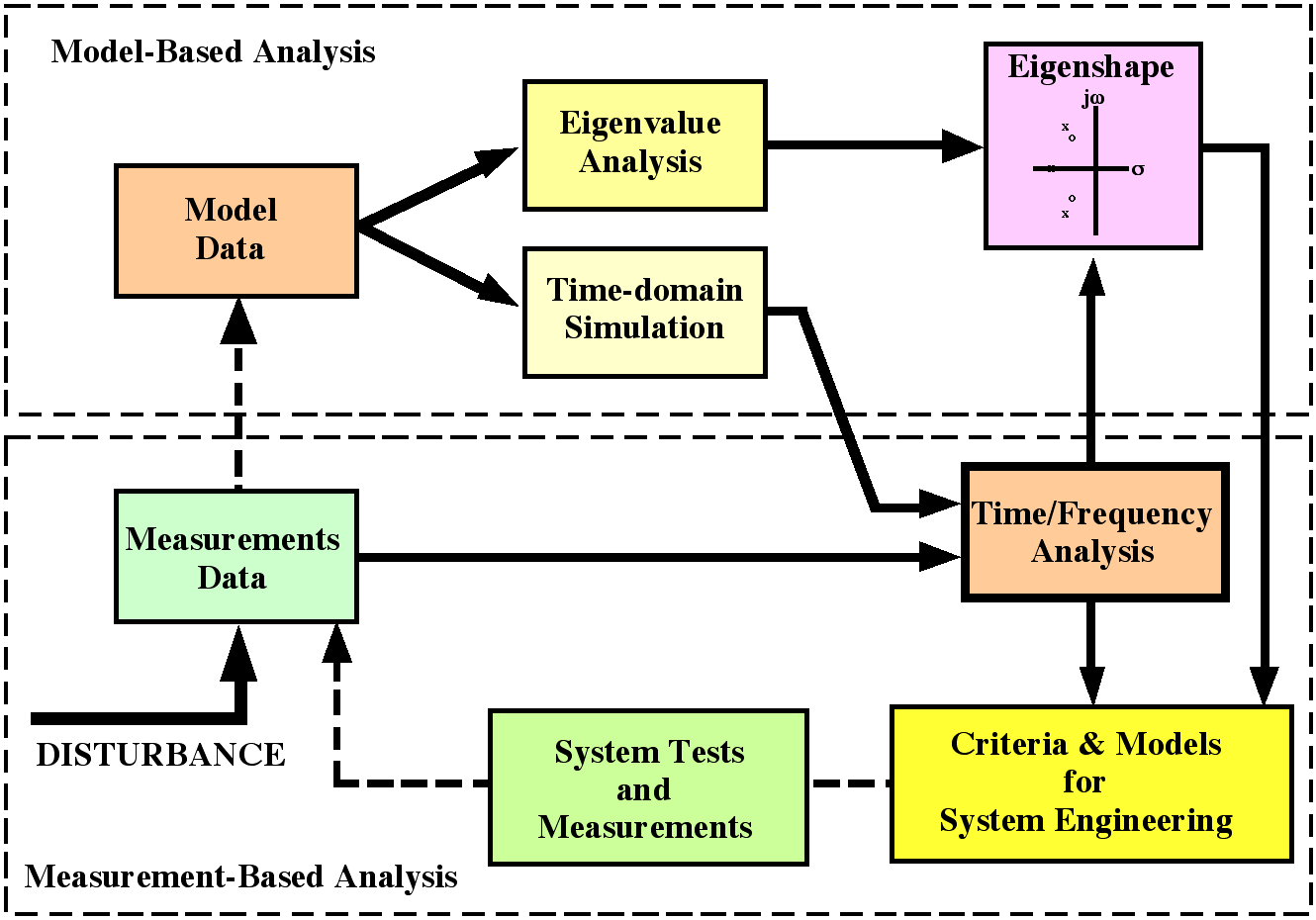

- Challenges in Feedback Control

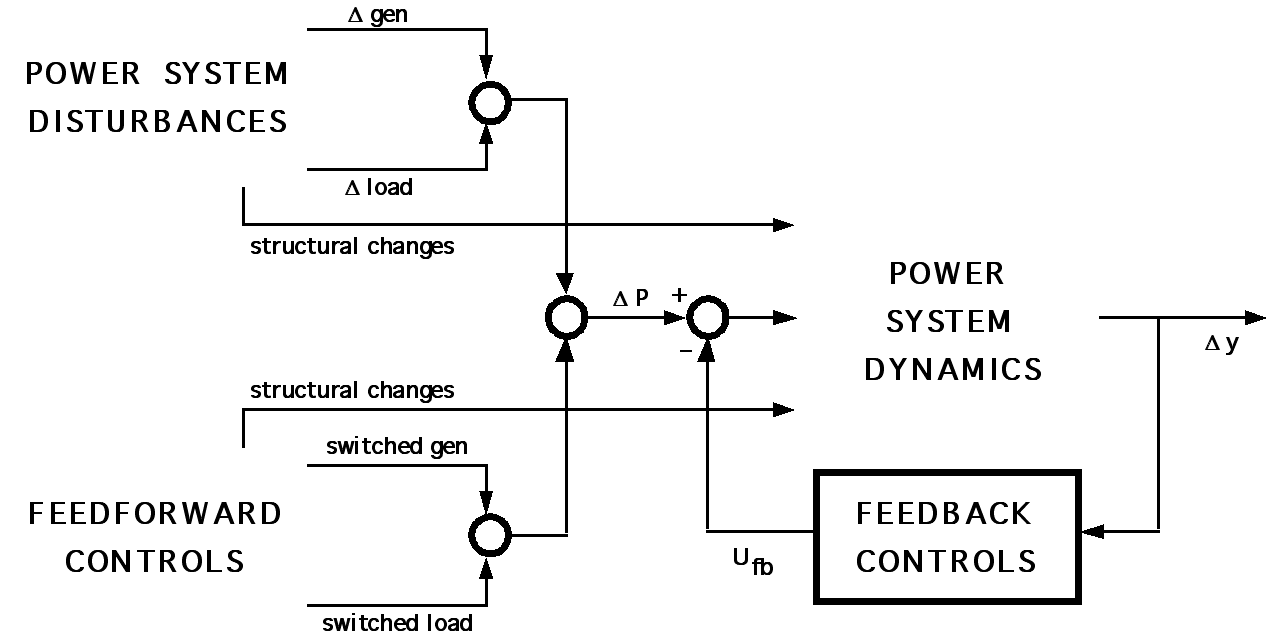

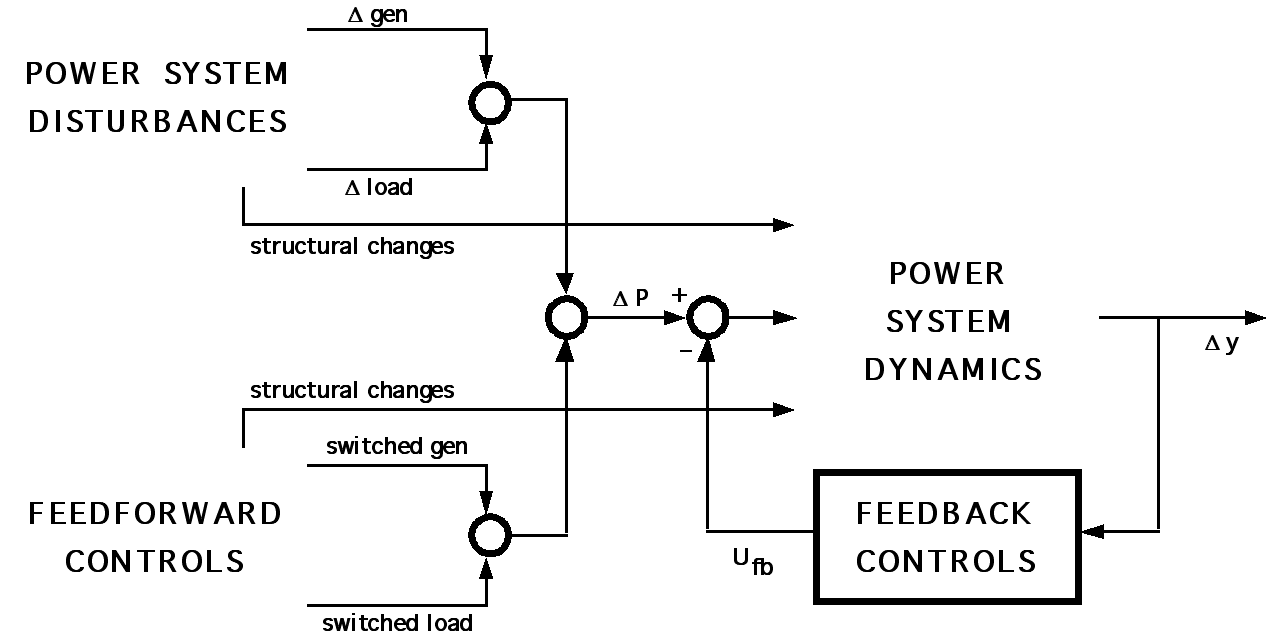

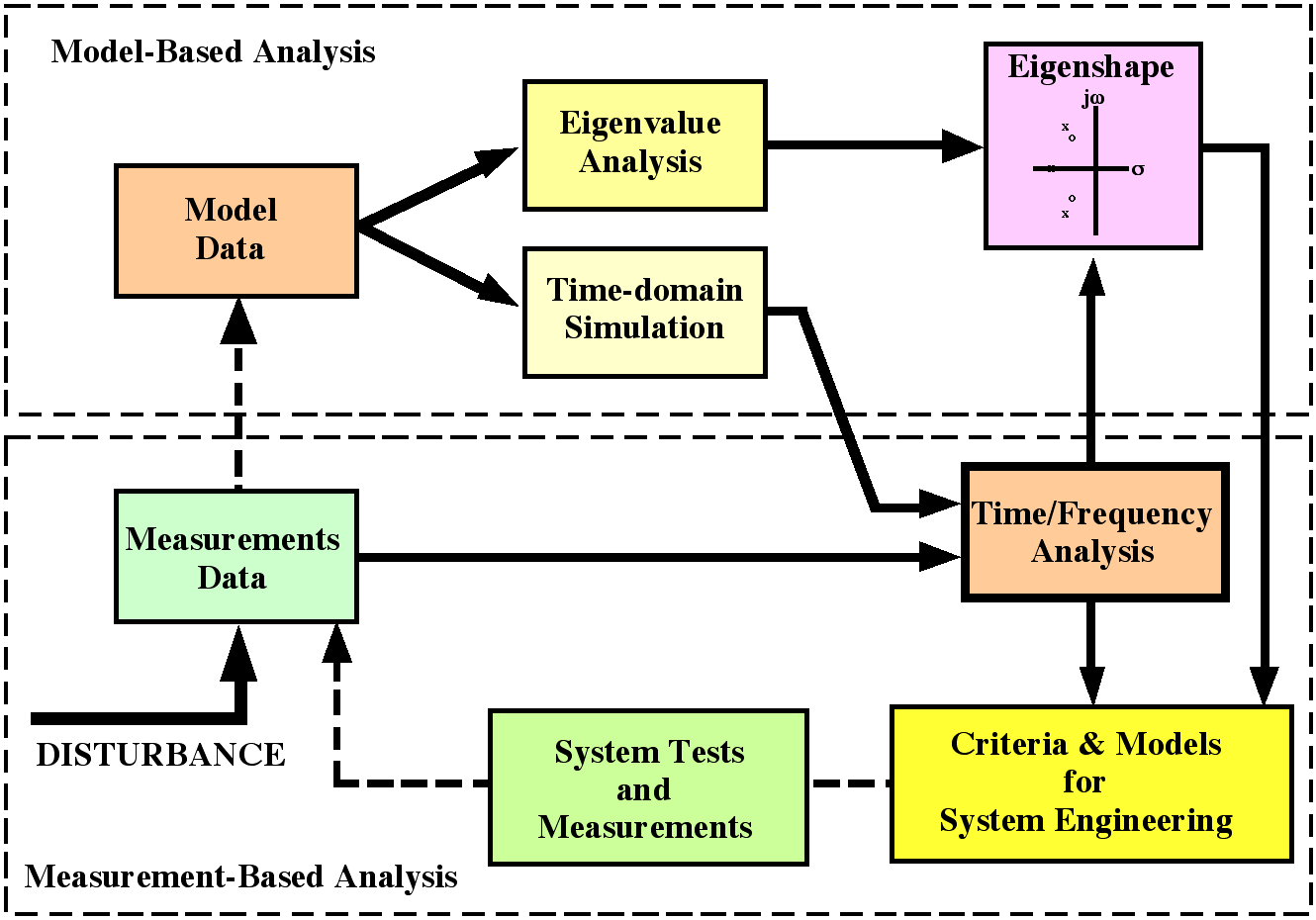

There are two types of stability control in a large power system. One of these uses "event driven" feedforward logic to seek a rough balance between generation and load, and the other refines that balance through "response driven" feedback logic. Fig. 3 indicates this relationship and the quantities involved.

Fig. 3. General structure of power system disturbance controls

The feedforward controls are generally rule based, following some discrete action when some particular condition or event is recognized. Typical control actions include coordinated tripping of multiple lines or generators, controlled islanding, and fast power changes on a HVDC line. Due to the prevalence of relay logic and breaker actuation, these are often regarded as special protective controls. Another widely used term is remedial action scheme, or RAS.

RAS control is usually armed, and is sometimes initiated, from some central location. Though this is not always the case, most RAS actuators are circuit breakers. Since this is a two-state device, the underlying hardware can draw upon relay technology, with communication links that are both simple and very reliable.

Feedback controls usually modulate some continuously adjustable quantity such as prime mover power, generator output voltage, or current through a power electronics device. Signals to and from the primary control logic are too complex for reliable long distance communication with established technologies. Newer technologies that may change this are gaining a foothold. At present, however, the established practice is to design and operate feedback controls on the basis of local signals only. As in the case of relays (Section 5.2), communication among such controllers is indirect and through the power system itself.

Some of the disturbance events demonstrate that this does not always provide adequate information. Particularly dramatic evidence of this was provided by vigorous cycling of the IPP HVDC line during the WSCC breakup of December 14, 1994. Less dramatic problems with coordination of HVDC controls might also be found in the August 10 Breakup and in the MAPP breakup of June 25, 1998.

The lesson in this is that wide area controls need wide area information. Topology information, or remote signals based upon topology, are the most reliable way to modify or suspend controller operation during really large disturbances (e.g., with islanding). Such information would also allow parameter scheduling for widely changing system conditions. Other kinds of supplemental information should be brought to the controller site for use in certification tests, or to detect adverse interactions between the controller and other equipment []. The information requirements of wide area control are generally underestimated, at considerable risk to the power system.

Though their cumulative effects are global to the entire power system, most feedback controls there are local to some generator or specific facility. Design of such controls has received much attention, and the related literature spans at least three decades. Despite this, the best technology for generator control is fairly recent and not widely used. Observations of gross system performance imply that, whatever the reason, stability support at the generator level has been declining over the years. EPRI’s 1992 report concerning slow frequency recovery [] is reinforced by the WSCC experience reported in [] and []. In the WSCC, ambient "swing" activity of the Canada-California mode has been conspicuous for decades and has progressively become more so. This strongly suggests that WSCC tuning procedures for power system stabilizer (PSS) units may not address this mode properly. Modeling studies commonly show that — under specific known circumstances — the stability contribution of some machines can be considerably improved. There are a lot of practical issues along the path from such findings to an operational reality, however.

Much or most of the observed decline in stability support by generator controls is attributed to operational practices rather than technical problems. It can be profitable to operate a plant very close to full capacity, with no controllable reserve to deal with system emergencies. Even when such reserves are retained it can still be profitable, or at least convenient, to obtain "smooth running" by changing or suspending some of the automatic controls. In past years the WSCC dealt with this through unannounced on-site inspections []. Engineering review of 1996 breakups argue that this was not sufficient. There must be some direct means by which the grid operator can verify that essential controller resources are actually available (and performing properly). Prior to this, it is essential that the providing of such resources be acceptable and attractive to the generation owners. Unobtrusive technology and proper financial compensation are major elements in this.

The emerging challenge is to make controller services as reliable as any other commercial product []. If this cannot be done then new loads must be served through new construction, or with less reliability.

- Maintenance Problems, RCM, and Intelligent Diagnosticians

Many of the outages suggest weaknesses in some aspect of system maintenance. Inadequate vegetation control along major transmission lines is an conspicuous example, made notorious by the 1996 breakups. There have also been occasional reports of things like corroded relays, and there are persistent indications that testing of relays in the field is neither as frequent nor as thorough as it should be. Apparently the relays that prematurely tripped McNary generation on August 10, 1996, had been scheduled for maintenance or replacement for some 18 months.

The utilities have expressed significant interest in new tools such as reliability centered maintenance (RCM) and its various relatives. A risk in this is that "maintenance just in time" can easily become "maintenance just too late." Some power engineers have expressed the view that preventive maintenance of any kind is becoming rare in some regions, and that the situation will not improve very much until utility restructuring is more nearly compete. There is not much incentive to perform expensive maintenance on an asset that may soon belong to someone else. Other engineers contest this assertion, or claim that such situations are not typical. The clear fact is that maintenance is a difficult but critical issue in corporate strategy.

The need for automated "diagnosticians" at the device level has been recognized for some years, and useful progress has been reported with the various technologies that are involved. These range from sensing of insulation defects in transformers through to generator condition monitors and self-checking logic in the "intelligent electronic devices" that are becoming ubiquitous at substation level. In the direct RCM framework we find browsers that examine operating and service records for indications that maintenance should be scheduled for some particular device or facility. Tracking such technologies is becoming difficult. The technologies themselves tend to be proprietary, and the associated investment decisions are business sensitive.

The need for automatic diagnosticians at system level is recognized, though not usually in these terms. Conceptualization of and progress toward such a product has been rather compartmentalized, with different institutions specializing in different areas. Real-time security assessment is perhaps the primary component for a diagnostician at this level. EPRI development of model based tools for this has shown considerable technical success (summarized in []), and the DOE/EPRI WAMS effort points the way toward complementary tools that are based upon real time measurements [-,,]. The latter effort has also shown the value of intelligent browser that would expedite full restoration of system services after a major system emergency. It seems likely that these various efforts will be drawn together under a Federal program in Critical Infrastructure Protection (CIP).

- "Operator Error"

This is a term that should be reserved for cases in which field personnel (who might not actually be system operators) do not act in accordance with established procedures. Such cases do indeed occur, with distressing frequency, and the effects can be very serious. The appropriate direct remedies for this are improved training, augmented by improved procedures with built-in cross checks that advise field personnel of errors before action is taken. Automatic tools for this can be useful, but — as shown by the balky reclosure system in the 1998 MAPP breakup — no robot should be given too much authority.

Deeper problems are at work when system operators take some inappropriate action as a result of poor information or erroneous instructions. (This may be an operational error for the utility, but it is not an operator’s error.) Sections 5.3 through 5.5 discuss aspects of this and point toward some useful technologies.

This technology set falls well short of a full solution. It will be a very long time before any set of simple recipes will anticipate all of the conditions that can arise in a large power system, especially if the underlying models are faulty. Proper operation is a responsibility shared between operations staff (who are not usually engineers) and technical staff (who usually are). Key operation centers should draw upon "collaborative technologies" to assure that technical staff support is available and efficiently used when needed, even though the supporting presonnel may be at various remote locations and normally working at other duties. Such resources would be of special importance to primary grid operators such as an ISO.

There is also a standing question as to how much discretionary authority should be given to system operators. Drawing upon direct experience, the operator is likely to have insights into system performance and capability that complement those of a system planner. In the past — prior to August 10 — the operators at some utilities were allowed substantial discretion to act upon that experience while dealing with small contingencies. Curtailing that discretion too much will remove a needed safety check on planning error.

- Special Lessons From Recent Outages — August 10, 1996

If we are fortunate, future students of such matters will see the WSCC Breakup of August 10 1996 as an interesting anomaly during the transition from a tightly regulated market in electricity to one that is regulated differently. The final hours and minutes leading to the breakup show a chain of unlikely events that would have been impossible to predict. Though not then recognized as such, these events were small "contingencies" that brought the system into a region of instability that WSCC planners had essentially forgotten. Indications of this condition were visible through much of the power system. Then, five minutes later, a final contingency struck and triggered one of the most massive breakups yet seen in North America.

Better information resources could have warned system operators of impending problems (Section 6.2), and better control resources might have avoided the final breakup or at least minimized its impact (Section 6.3). The finer details of these matters have not been fully resolved, and they many never be. The final message is a broader one.

All of the technical problems that the WSCC identified after the August 10 Breakup had already been reported to it in earlier years by technical work groups established for that purpose [,]. In accordance with their assigned missions, these work groups recommended to the WSCC general countermeasures that included and expanded upon those that were adopted after the August 10 Breakup. Development and deployment of information resources to better assess system performance was well underway prior to the breakup, but badly encumbered by shortages of funds and appropriate staff.

The protracted decline in planning resources that lead to the WSCC breakup of August 10, 1996 was and is a direct result of deregulatory forces. That decline has undercut the ability of that particular reliability council to fully perform its intended functions. Hopefully, such institutional weaknesses are a transitional phenomenon that will be remedied as a new generation of grid operators evolves and as the reliability organizations change to meet their expanding missions.

- Western System Oscillation Dynamics

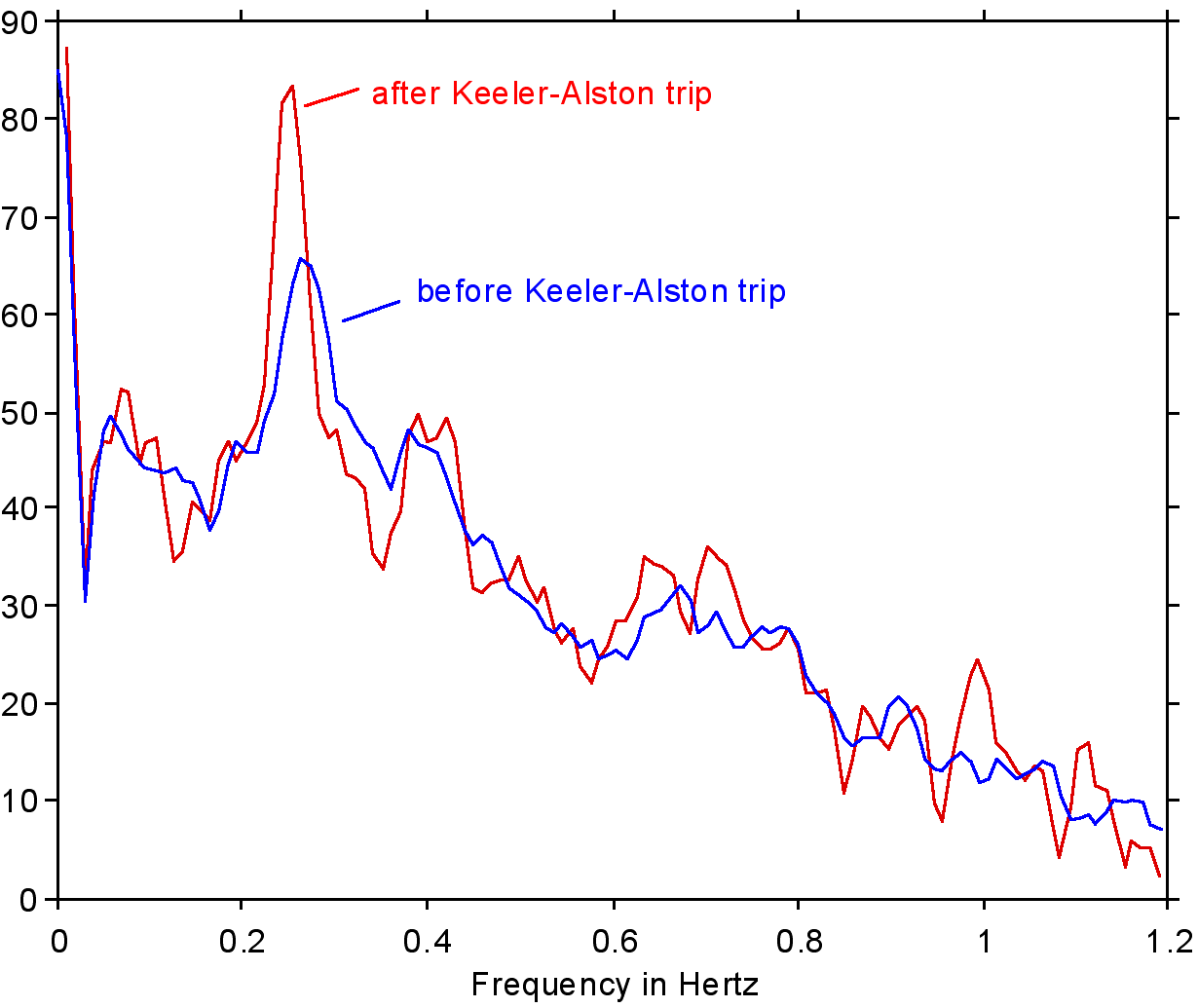

Understanding the WSCC breakups of 1996 requires some detailed knowledge of the oscillatory dynamics present in that system, and of the way that those dynamics respond to control action. This Section provides a brief summary of such matters.

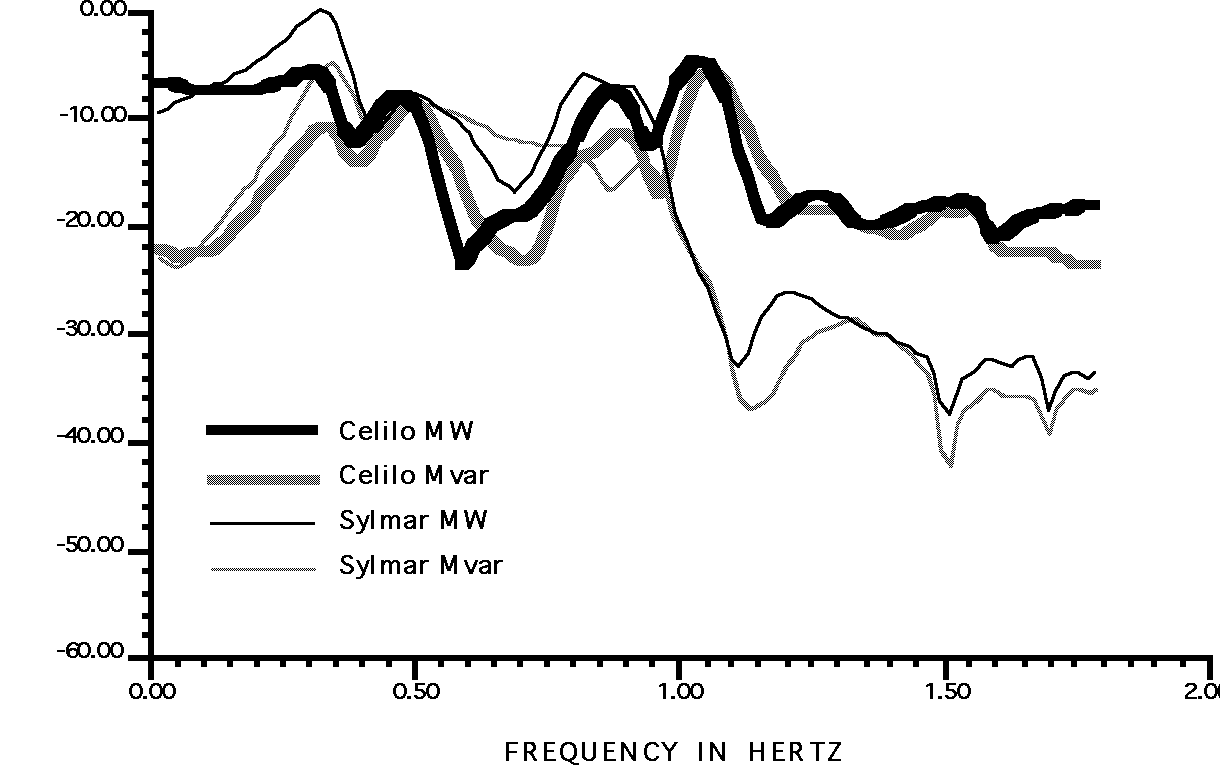

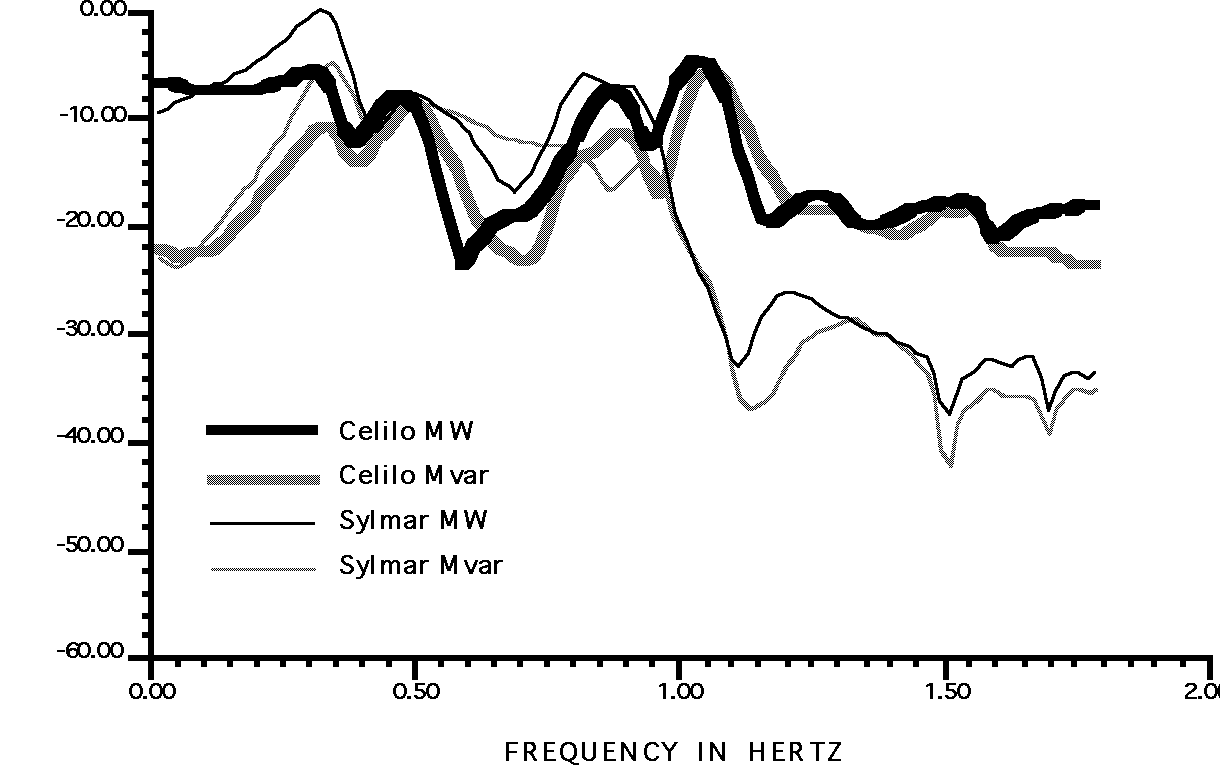

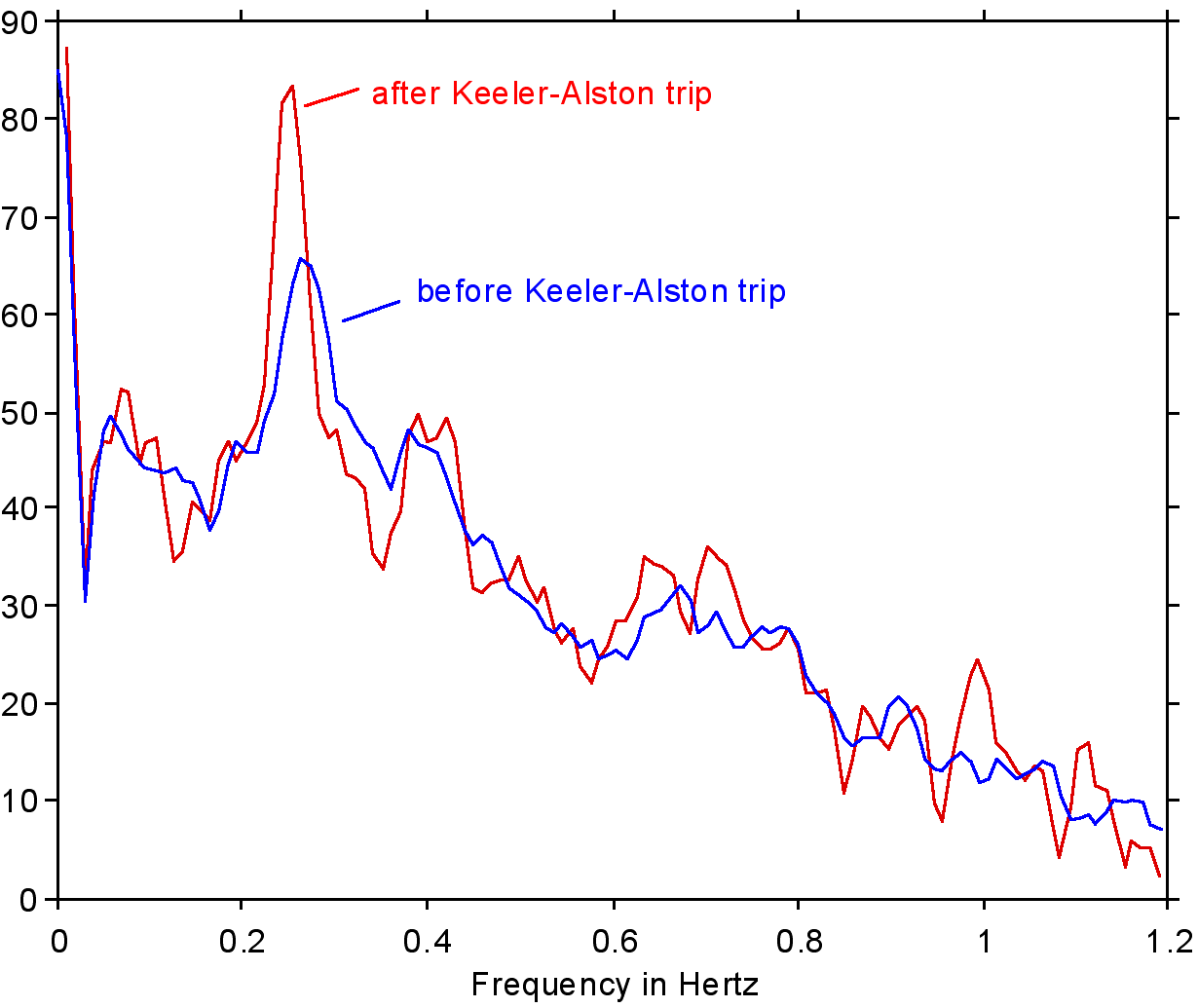

Fig. 4. Gain response of PACI line power to complex power injections at terminals of the PDCI

The more important interarea modes of the western power system are visible in Fig. 4. The data there show response of the Pacific AC Intertie to real and reactive power injections at the Celilo and the Sylmar terminals of the Pacific HVDC Intertie. These results were generated with a simulation model that had been calibrated against system disturbances of the early 1990’s, and seem realistic.

The figure supports the following observations:

- At 0.33 Hz: (the Canada — California, or "AC Intertie" mode)

- response to Sylmar MW is 6 dB (i.e., twice) stronger than that for any other injection. Changes in this would substantially affect response to PDCI real power modulation.

- response to Sylmar Mvar is strong, and can be expected to change substantially with Sylmar conditions.

- a reactive power device (such as an SVC) near Sylmar would have about the same "leverage" as a real power device (resistor brake or storage unit) near Celilo.

- At 0.45 Hz: (the Alberta mode)

- the response components are essentially the same for all injections.

- single-component modulation of an SVC, resistor brake, or storage unit would all be equally effective for damping of the associated mode, if located near Celilo or Sylmar.